March 17, 2026 AI Digest — Agentic AI accelerates, OpenAI under scrutiny, and enterprise AI factories scale

Breakthroughs in agentic AI, policy battles, and enterprise acceleration dominate today's AI beat, with OpenAI facing scrutiny and NVIDIA-led initiatives pushing AI toward production scale.

The AI frontier has shifted from novelty to consequence, and today’s briefing is a living mural of risk, governance, and scale.

From Codex Security to agentic ambition and the hardware that underwrites enterprise-grade models, the day's headlines converge on a single question: how do we move fast with guardrails firm enough to stay ahead of what comes next?

Across governance, safety, and strategic deployment, this briefing is a tour through a gallery where each stroke carries policy and each shadow hints at liability, and at opportunity.

| Metric | Value | Signal |
|---|---|---|
| Total articles | 19 | ↑ |
| Images available | 5 of 19 | ↓ |
| Panels with images (live) | 4 | ↑ |

Codex Security Deepened: Beyond SAST to Constraint Reasoning

OpenAI argues that traditional static application security testing (SAST) tools cannot capture the full surface of Codex-scale vulnerabilities. Constraint reasoning instead provides a probabilistic map of how a model could operate under given constraints, flagging higher-fidelity risk scenarios before they ripple into production.

Governance is no longer a separate layer—it's the envelope inside which code can learn, adapt, and fail safely. This is security as a continuous constraint dialogue, not a single-door policy check.

- Constraint reasoning is designed to identify vulnerabilities within allowable execution paths, not just static signatures.

- Guardrails must be integrated into the development lifecycle, not retrofitted after release.

- Policy and licensing implications rise as the boundary between allowed and disallowed outputs grows more complex.

Constraint reasoning lets us hear the dialogue between intent and outcome.

— OpenAI Blog

Source: OpenAI Blog

Nurturing agentic AI beyond the toddler stage — MIT Technology Review lays out a roadmap

A pragmatic, milestone-driven path for agentic AI is unfolding: safety and governance are not afterthoughts but the rhythm that guides each developmental leap. The roadmap foregrounds safety-by-design, transparent governance, and measurable developmental milestones that keep the trajectory from outrunning the guardrails.

This is not a restraint on imagination—it's a framework for scaled agency, where learning loops, auditability, and governance become a competitive differentiator for responsible autonomous systems in enterprise environments.

- Developmental milestones anchor agentic progress to safety and governance constraints.

- Transparent governance and auditable decision-making become essential for trust at scale.

- Safety-first design translates to more reliable, pluggable agent capabilities for business users.

A pragmatic roadmap is not a cage; it’s a choreography that keeps agents aligned as they scale.

— MIT Technology Review

Source: MIT Technology Review

OpenAI internal safety voices warned against a reckless ChatGPT launch

Internal briefing notes reportedly captured tensions between ambition and user well-being, underscoring a discipline in which safety flags must outrun promotional momentum. The warning holds a mirror up to a culture trying to chase velocity without compromising trust.

When governance voices insist on caution, the product roadmap shifts from “launch now” to “launch with guardrails.” The lesson is simple: ambition without guardrails bleeds trust and invites greater systemic risk.

- Early internal risk signals revealed the friction between product velocity and safety commitments.

- Safety and governance must be embedded in product roadmaps from the outset.

- Ambition requires proportional guardrails to preserve user well-being and trust.

Pushback inside a leading AI lab is the most honest mirror for responsible acceleration.

— Ars Technica

Source: Ars Technica

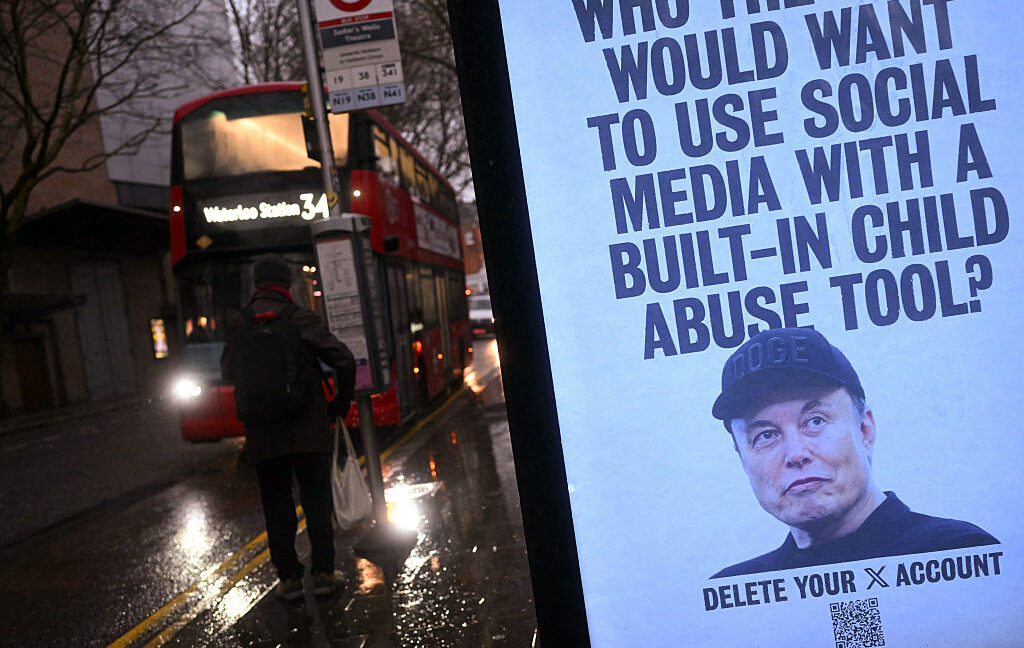

Pundits and policymakers weigh in as AI deepfake chatter intensifies policy risk

As AI-generated media circulates with plausible likenesses, policy debates heat up around labeling, accountability, and platform safeguards. The deepfake discourse moves from novelty to governance, with real implications for product design, liability, and public trust.

- Labeling, transparency, and safeguards become central governance questions for generative AI products.

- Public trust hinges on robust risk controls and clear accountability for outputs.

- Policy conversations will shape how products disclose synthetic content and manage user consent.

When synthetic media meets policy, the margin between confidence and confusion becomes policy’s battleground.

— Ars Technica

Source: Ars Technica

The horizon: Looking ahead

Today’s convergence (Codex Security's constraint-reasoning approach, the agentic roadmap, the rising tide of policy scrutiny, and the hardware-backed scale narrative) points to a future where enterprise AI factories are governed more like regulated pipelines than novel experiments. The path to scale will be paved by governance that travels with code, not behind it, and by hardware ecosystems that make auditable decisions feasible at the model sizes and throughputs enterprises demand.

As enterprises prepare to deploy at speed, the conversation shifts from “Can we build it?” to “Can we govern it?” That shift will be measured in compliance architecture, licensing clarity, and a shared vocabulary for responsible autonomy that can survive audits, lawsuits, and public scrutiny alike.

Look to NVIDIA’s GTC as a bellwether for the hardware and software tempo of the next era of AI factories—where the pace of capability is matched by the cadence of governance. The industry is learning to scale with eyes wide open, and the signal is clear: speed without safety is a liability; safety without speed is a missed opportunity.

Summarized stories

Heidi summarizes each daily briefing from trusted AI industry sources and links every story back to its full article for deeper context.