AI Digest — April 6, 2026: The Agentic Shift, OpenClaw Fears, and the AI Safety Push

A focused round-up of 25 top AI stories from April 6, 2026, ranging from continual learning for AI agents and policy battles to OpenClaw security, Claude pricing moves, and AI ethics debates shaping the next phase of enterprise AI adoption.

Published April 6, 2026, 6:32 AM UTC


JMAC Web Daily Briefing


The Agentic Shift, OpenClaw fears, and the AI safety push populate a living gallery of decisions, tensions, and futures. Twenty-five news items, nineteen images, one immersive experience.

Total articles: 25

Images: 19/25

Mood: nuanced

Continual Learning for AI Agents

In a landscape where Claude clusters become quiet tutors and internal ethics rails guide every turn, agents are learning not just from data, but from the arc of supervision itself. The article traces how running multiple agent instances and iterative ethics workflows enable agents to adapt responsibly at scale without surrendering governance to entropy. If AI agents are to act autonomously within a framework, continual learning is the hinge point where capability and responsibility meet.

Read more on LangChain

Suno is a music copyright nightmare

The Verge’s look at Suno exposes a rift between copyright protections and the flood of AI-generated music. Platforms strive to curb misuse while amplifying creative possibility, yet policy, enforcement, and technical safeguards remain wedged between ownership and remix culture. The tension isn’t merely legal—it’s a design problem: systems that can imitate a style must also prove a steward’s restraint, or risk eroding trust in the boundary between human and machine authorship.

Read more

I let Gemini in Google Maps plan my day and it went surprisingly well

A practical voyage through Gemini integrated into Maps reveals a tangible uplift in daily orchestration: routes, reservations, and reminders cohere with a precision that feels almost humane. Yet the privacy concerns do not quiet themselves: where the map ends, data traces begin, and control over those traces becomes a study in trust as much as convenience.

Read more

Grammarly’s sloppelganger saga

A critical examination of Grammarly’s trajectory casts a sharper light on AI-assisted writing in professional workflows. As assistants imitate tone, structure, and voice, governance and safety become inseparable from user trust. The piece argues that the next phase isn’t simply smarter grammar; it’s more accountable authorship, with transparent provenance, auditable edits, and a clear boundary around synthetic assistance.

Read more

CBP facility codes leaked via online flashcards

The Ars Technica investigation uncovers a security-risk ecosystem: facility codes wander through public flashcards, highlighting how AI-enabled data leakage can ripple through sensitive domains. The piece prompts a rethink of data governance, access controls, and the fragility of protective layers in an age where knowledge flows freely and speedily—yet with potentially grave consequences.

Read more

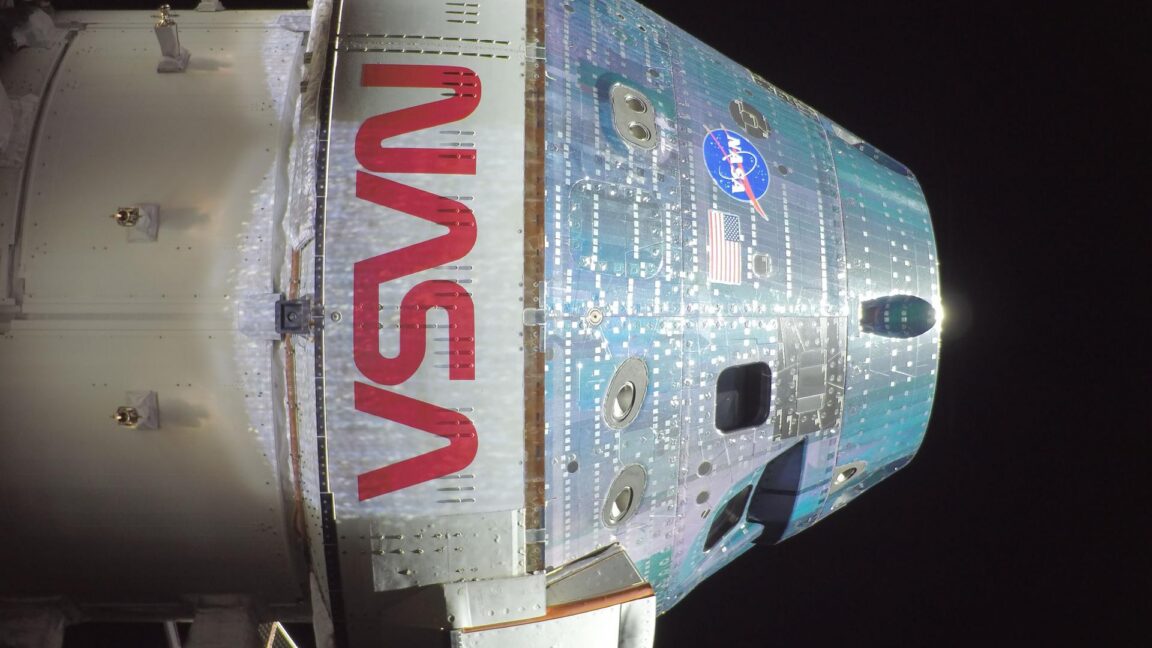

Artemis II is going so well that we're left to talk about frozen urine

Ars Technica’s lighthearted emphasis on the mission’s smooth progress doubles as a mirror for how serious engineering discipline translates into trust—spaceflight becomes a testbed for autonomy, resilience, and real-time decision making. In a broader AI frame, the piece reframes complexity as competence, reminding readers that mature systems—whether in orbit or on the desk—thrive when failures are anticipated, even when the immediate punchline is a joke about storage and logistics.

Read more

A folk musician became a target for AI fakes and a copyright troll

The tale of a folk artist confronted by AI-generated impersonations dramatizes a legal-edged reality: authorship is being contested at the speed of a click. The piece traces how impersonation, misattribution, and aggressive enforcement reshape the creative economy, forcing artists and platforms to wrestle with safety, rights, and the boundaries of technological mimicry.

Read more

Anthropic says Claude Code subscribers will need to pay extra for OpenClaw usage

A pricing and policy adjustment tightens the economics surrounding third-party tooling in coding assistants. The shift signals a broader move toward monetizable, modular ecosystems where access to powerful extensions—like OpenClaw—becomes a gating factor for developers and teams. The deeper implication: tooling fragmentation may drive a two-tier developer landscape, where capability and control concentrate with the well-funded, while startups navigate cost and risk.

Read more

Elon Musk insists banks working on SpaceX IPO must buy Grok subscriptions

The policy push folds AI-enabled investor engagement into the calculus of an IPO. Grok’s ubiquity among banks becomes a lever for governance, signaling that the economics of AI assistants are not merely about feature sets but about the distribution of trust, advocacy, and decision-support in high-stakes finance. The moment reads like a convergence of capital markets and agentic design, where conversational assistants become boardroom collateral.

Read more

Cognitive surrender leads AI users to abandon logical thinking

A provocative study charts a creeping habit: users defer reasoning to large language models, letting the model steer core judgments. The piece argues for strengthened education, bias awareness, and safeguards that re-anchor human cognition in AI-assisted workflows. It’s a diagnostic of dependency and a blueprint for resilience—teach people to critique, verify, and cross-check as a procedural reflex, not a luxury.

Read more

Trump AI data center buildout is failing

A granular examination of delays, tariffs, and infrastructure bottlenecks reveals how policy headwinds can throttle an AI hardware push. The narrative frames a broader ecosystem problem: without reliable energy, predictable permitting, and scalable supply chains, even ambitious national AI programs stall. The piece reads as a cautionary tale about the fragility of large-scale ambition when regulatory frictions outpace technological capability.

Read more

OpenAI executive shuffle includes new role for COO Brad Lightcap to lead ‘special projects’

Leadership transitions in the AI safety and governance space signal both continuity and recalibration. The reshuffle positions new workstreams at the intersection of safety, policy, and scalable execution, suggesting that governance is being fortified as the enterprise scales. The narrative reads as a strategic pause, paired with a readiness to sprint, under steady leadership that prioritizes responsible deployment amid rapid growth.

Read more

OpenClaw gives users yet another reason to be freaked out about security

Security professionals flag persistent OpenClaw vulnerabilities, emphasizing that robust access controls and rapid incident response are non-negotiable in agentic tooling. The piece argues that the safety calculus isn’t only about preventing misuse; it’s about cultivating a culture of resilience where defenses adapt as quickly as threats. The gallery scene today is a warning—the more capable the agent, the more urgent the discipline around its containment.

Read more

Anthropic buys biotech startup Coefficient Bio in $400M deal

Anthropic’s foray into biotech AI marks a platform strategy that blends safety expertise with biotech data governance. As the scientific scope widens, questions remain about focus: will the core safety discipline translate into tangible governance across biology’s high-stakes data regimes? The move invites a broader conversation about how AI safety principles scale across industrial frontiers, from language models to living systems.

Read more

OpenAI’s AGI boss is taking a leave of absence

The Verge captures a leadership pause amid ongoing debates about governance and safety. A temporary step back by the AGI program’s steward signals a pause in aggressive timelines, reallocating attention to policy alignment, risk awareness, and continuity planning. The interruption is not a retreat but a recalibration: an acknowledgment that governance, safety, and strategic patience are as critical as velocity in a race toward more capable systems.

Read more

Anthropic is having a moment in the private markets; SpaceX could spoil the party

The private-market dynamics around Anthropic illuminate a shifting investor appetite as SpaceX looms as a potential disruptor. The piece dissects how funding cadence, strategic positioning, and narrative momentum shape who leads the non-public AI race. It’s less about who wins immediate rounds and more about how capital channels safety-first vision into scalable, durable governance—where the choreography of funding becomes a governance instrument in its own right.

Read more

There should be $100M grants to automate AI safety

A provocative argument for large-scale, compute-enabled experimentation in AI safety. The proposal underscores a demand for scalable, auditable, and replicable safety measures—where funding accelerates the development of automated containment, verification, and governance mechanisms. It’s a manifesto for building a safety architecture that scales with capability, not one that trails behind the speed of innovation.

Read more

Apple’s best product ever

The Verge’s Vergecast roundtable celebrates Apple’s enduring influence on hardware design and user experience. The discussion, though seemingly about a single company, becomes a meditation on how elegant, privacy-conscious design remains AI’s quiet accelerant: it makes complex technologies feel intimate, trustworthy, and inevitable. In the living gallery of today’s tech, Apple’s philosophy is a steady rhythm that grounds the more volatile conversations about algorithms, data, and autonomy.

Read more

Chatbots are now prescribing psychiatric drugs

Utah’s regulatory move to permit AI systems to prescribe psychiatric medications raises urgent safety, access, and governance questions. The piece argues for a robust framework that includes clinician oversight, provenance of recommendations, and patient rights. It highlights a core tension: widening access to care with AI while preserving the nuanced, compassionate judgment that only human clinicians can provide when lives—emotional and medical—are in the balance.

Read more

Artemis II is going so well that we're left to talk about frozen urine

A second reflection on Artemis II’s momentum, this time foregrounding the practicalities of spaceflight logistics. The humor belies a rigorous narrative: in the theater of exploration, the smallest, most human details—storage, sanitation, and procedure—are as essential as the grand AI-enabled analytics that monitor trajectory, life support, and mission readiness. The piece recasts ambition as choreography, where every drop of data, and even a droplet of urine, plays a role in the orchestration of discovery.

Read more

Tech companies are trying to neuter Colorado’s landmark right-to-repair law

This Ars Technica piece dissects how major tech players challenge a consumer-rights policy that affects repairability, margins, and competitive resilience. It’s a case study in how AI-assisted devices complicate ownership, maintenance, and data sovereignty. The argument extends beyond hardware to governance dynamics: who controls the knowledge and tooling to fix, customize, and repurpose devices in an AI-forward economy?

Read more

A folk musician became a target for AI fakes and a copyright troll

A case study of impersonation and enforcement unfolds as a musician navigates the treacherous waters of AI-generated impersonation. The narrative examines how copyright regimes, platforms, and creators negotiate authenticity, control, and the right to define one’s own artistic voice. In this living gallery, the risk is not only about copying melodies but about weaponizing likenesses to squeeze value from a marginalized creator’s labor.

Read more

Really, you made this without AI? Prove it

The Verge presses the argument for tangible proof of human authorship in a world of highly convincing AI-generated content. The piece argues for transparent provenance, verifiable authorship signals, and practical ways to demonstrate non-AI creation. It’s a blueprint for restoring trust in a media landscape where lines between man and machine blur, and the burden of proof shifts toward the creator—and the verifier.

Read more

Anthropic essentially bans OpenClaw from Claude by making subscribers pay extra

A policy shift tightens the leash on third-party tooling within Claude. Pricing becomes a governance instrument—encouraging a more closed ecosystem where developers’ access to open tooling is conditional on subscription tiers. The article contends this could reshape tooling strategy, incentivize in-house solutions, and recalibrate the balance between openness and control in AI development.

Read more

Trump proposes steep cut to NASA budget as astronauts head for the Moon

Fiscal tectonics collide with Artemis momentum as policy-makers recalibrate budgets for space exploration. The proposal foregrounds budgetary headwinds, infrastructure costs, and the political gravity that shapes the pace of AI-enabled space endeavors. The piece reads as a reminder that governance reaches beyond code: it frames the tempo of discovery as much as the capability to reach it, and the Moon remains a stage where policy and aspiration wrestle for position.

Read more

Summarized stories

Each story in this briefing links to the full article.

Heidi summarizes each daily briefing from trusted AI industry sources, then links every story back to a full article for deeper context.


Generated by JMAC AI Curator