April 14, 2026 AI News Digest — OpenAI SDKs, enterprise agenting, and the new era of trusted AI governance

A balanced mix of OpenAI SDK upgrades, enterprise agenting breakthroughs, and governance-focused coverage drives this day’s AI discourse, with Google Gemini apps, industry-scale AI governance, and market-ready AI tools taking center stage.

A day of convergences: sandboxed agents that contain risk by design, trusted cyber access expanding to vetted defenders, and a governance blueprint that binds speed to safety. This briefing walks through the breakthroughs, tensions, and opportunities rippling through OpenAI, enterprise platforms, and global policy.

Google's Chrome now stores Gemini prompts as “Skills,” turning fleeting sessions into durable workflows across multiple pages. The browser becomes a stage for persistence: a user’s intent, encoded as reusable routines, ready to be summoned with a keystroke or a gesture.

The Verge reports on efforts to harden watermark integrity against reverse engineering. The conversation is less about glyphs on an image and more about proving provenance in a world of AI-generated media—trust, traceability, and the social contract that comes with synthetic content.

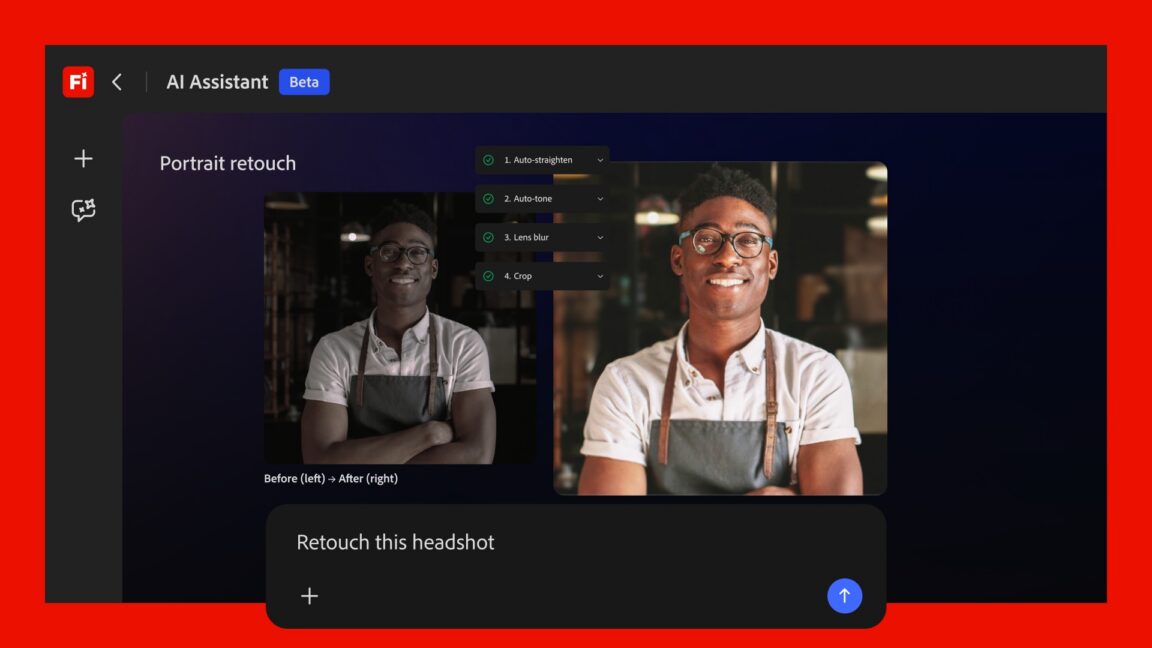

Adobe’s Creative Cloud embraces Claude Code-esque tooling, signaling a shift toward code-aware AI helpers for creatives. The boundary between prompt-driven artistry and programmable artistry blurs as designers gain code-driven precision, versioned pipelines, and a faster feedback loop for creative experiments.

Google’s Gemini makes a home on the desktop, offering a native Mac experience that folds AI-assisted workflows into the files and apps professionals already rely on. The result is less about switching ecosystems and more about a seamless intelligence layer that travels with the user—an orchestration layer for productivity.

Article 1 — OpenAI advances Agents SDK with sandbox execution and long-running capabilities

In the quiet hum of a data center, where a thousand little decisions drift like code-dust between shelves of servers, OpenAI unveils the next evolution of its Agents Software Development Kit. The sandbox is now native, not an afterthought—an isolation chamber that makes risky curiosity safer by design. Long-running agents, once fragile experiments, now carry a model-native harness that threads across files and tools with disciplined persistence.

The significance isn’t merely technical. It’s a governance proposition: you can build agents that operate with strict sandboxed boundaries, logging each decision, and self-correcting their course if they misstep. Enterprises glimpse a future where automation extends its reach without surrendering control. But with capability comes responsibility: the push toward more capable agents must be matched with auditable governance, robust access controls, and transparent risk assessments as standard behavior, not afterthoughts.

The market responds with measured enthusiasm. Agents that can endure the longue durée of business processes—an onboarding assistant that returns day after day to refine payroll reconciliations, a procurement bot that negotiates within policy constraints, or a risk-aware finance bot that flags anomalies—are moving from curiosity to core capability. Yet the architecture must guard against creeping surface-level trust: assurances must be machine-checkable and policy-driven, not merely product claims.

Tags: OpenAI, Agents, SDK, AI Agents, security. Source: OpenAI Blog.

Article 2 — OpenAI expands trusted cyber access with GPT-5.4-Cyber for defenders

If governance is a hallway, then trusted access is the doorman who doesn’t mistake the quiet for consent. OpenAI’s Trusted Access for Cyber evolves with GPT-5.4-Cyber, made available to a curated, vetted cadre of defenders equipped to stage AI-assisted defenses at scale. The program broadens safeguards as AI-driven cyber operations become the de facto frontline, not a risk calculator tucked away in a lab.

This isn’t mere security theater. It’s a recognition that the speed and reach of AI tools must be matched by rigorous provenance, policy alignment, and auditable trails. For enterprise security teams, the framework offers a reproducible baseline—an assurance that the AI components deployed in critical infrastructure are designed, tested, and monitored with explicit guardrails. The tension remains between agility and control, but the trajectory leans toward a more resilient, trust-forward runway for AI-enabled cyber defense.

The broader implication is a shaping of industry norms: access governance becomes a feature, not an afterthought; responsibility frameworks migrate from spreadsheets to embedded design principles; and defenders, as operators of complex AI ecosystems, gain a clearer map of how to deploy, monitor, and evolve AI agents without losing sight of risk.

Tags: OpenAI, cybersecurity, GPT-5.4-Cyber, trusted access. Source: OpenAI Blog.

Article 3 — Cloudflare teams with OpenAI to empower agentic workflows in Agent Cloud

The edge becomes a storyboard for practical AI: Cloudflare partners with OpenAI to ferry GPT-5.4-derived agents into Agent Cloud, enabling secure, scalable, real-world tasking inside enterprise environments. The promise is not merely a faster bot; it is a disciplined orchestration layer that respects network perimeter, data sovereignty, and the rhythm of business processes.

When agents move across edge and cloud, the design discipline shifts. The risk surface expands—more corridors, more gateways, more systems that must speak a common language of policy and provenance. The opportunity, however, is extraordinary: real-time decision-making embedded in the fabric of corporate operations, from supply chain orchestration to customer service workflows, all under a governance framework that emphasizes auditability and governance-by-design.

Tags: OpenAI, Cloudflare, Agent Cloud, Edge computing. Source: OpenAI Blog.

Article 4 — OpenAI and enterprise SDKs push safer, more capable agents, says TechCrunch

TechCrunch AI’s lens captures a market-wide shift: enterprise SDKs are increasingly framed around governance, safety, and robust operational controls. OpenAI’s updates aren’t just about more capable agents—they’re about safer agents that behave in ways that teams can trust, document, and regulate. It’s the difference between building a car and shipping a self-driving fleet—both powerful, but one with a governance dashboard that stays visible at every turn.

The narrative extends beyond product labels. Enterprises seek a repeatable, auditable playbook: how agents are authorized, how tools are gated, how outcomes are measured, and how red-teaming exercises become standard drills within product cycles. The message is consistent: you don’t abandon governance for velocity; you align velocity to governance, creating a resilient automation layer that compounds value without compromising safety.
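
To make the playbook idea concrete, here is a minimal sketch of tool gating with an audit trail. It is an illustration only and not drawn from OpenAI's SDK or any vendor's product: `ToolGate`, `AuditRecord`, the `procurement-bot` agent, and the tool names are all hypothetical, and the policy is assumed to be a simple allow-list per agent.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable


@dataclass
class AuditRecord:
    """One entry in the audit trail (hypothetical schema)."""
    timestamp: str
    agent: str
    tool: str
    allowed: bool


@dataclass
class ToolGate:
    # Assumed policy shape: agent name -> set of tool names it may call.
    policy: dict[str, set[str]]
    audit_log: list[AuditRecord] = field(default_factory=list)

    def call(self, agent: str, tool: str, fn: Callable[..., Any], *args: Any) -> Any:
        """Record the attempt, then run the tool only if the policy allows it."""
        allowed = tool in self.policy.get(agent, set())
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(AuditRecord(stamp, agent, tool, allowed))
        if not allowed:
            raise PermissionError(f"{agent} is not authorized to call {tool}")
        return fn(*args)


# Usage: one permitted call and one denied call, both logged.
gate = ToolGate(policy={"procurement-bot": {"get_quote"}})
quote = gate.call(
    "procurement-bot", "get_quote", lambda sku: {"sku": sku, "price": 10}, "A-1"
)

try:
    gate.call("procurement-bot", "approve_payment", lambda: None)
except PermissionError as err:
    print(f"blocked: {err}")
```

The design choice worth noting is that the attempt is logged before the permission check raises, so denied calls leave the same evidence as permitted ones.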

Tags: OpenAI, agents, enterprise, SDK, safety. Source: TechCrunch AI.

Article 5 — Hightouch reaches $100M ARR with AI-powered marketer toolkit

The automation economy keeps expanding into marketing, where data-led decisioning becomes a product in itself. Hightouch’s AI agent toolkit is a telling indicator: a move toward monetizable AI-enabled orchestration that translates audience signals into measurable outcomes. The milestone, $100 million in ARR, signals more than revenue; it signals the arrival of AI-enabled marketer capabilities as a recurring-revenue business.

The ecosystem effect is palpable. Toolchains become end-to-end: data pipelines feed audience models, agents execute campaigns, and governance encodes guardrails around data privacy, attribution, and experimentation. Investors are watching the velocity curve: if the tooling can deliver both precision and compliance at scale, the business model tilts decisively toward sustainable, AI-powered growth.

Tags: AI, marketing, startups, ARR, automation. Source: TechCrunch AI.

Article 6 — LinkedIn data challenges the AI-blame narrative as hiring declines continue

The hiring market hesitates at the edge of AI’s ascent, as LinkedIn data complicates the simple narrative that AI is driving a universal hiring slump. Macro factors (rate dynamics, inflation, and sectoral shifts) are the primary forces shaping the job market for now. AI is in the mix, but not the sole conductor.

For practitioners, the takeaway is a reminder to diversify signals. AI’s productivity gains can unlock efficiency while the labor market remains a function of broader macroeconomic currents. The call to action is nuanced: deploy AI where it augments human judgment and where the data trail is clean, compliant, and interpretable enough to inform hiring strategies without over-indexing on any single indicator.

Tags: AI, hiring, jobs, LinkedIn. Source: TechCrunch AI.

Article 7 — US-China AI gap narrows, but responsible AI gap lingers, Stanford index finds

Stanford’s 2026 AI Index maps a narrowing leadership gap, yet the responsible AI discipline remains imperfectly harmonized across jurisdictions. The metric of “responsible AI” shifts from a regulatory afterthought to a core capability of AI platforms: governance baked in, auditability standard, and a privacy-by-design posture that can travel across borders. The global landscape grows more complex as capabilities accelerate, and with it the political economy of AI governance tightens around shared norms and enforceable standards.

The implications for enterprises are practical: global deployments must be underpinned by coherent governance mappings that translate local regulations into enterprise playbooks across regions. The tension remains between rapid deployment and responsible practice, a tension that is not going away but must be managed with transparent metrics and verifiable controls.

Tags: AI benchmarks, governance, Stanford HAI, AI Index. Source: AI News.

Article 8 — MIT Technology Review maps 10 things that matter in AI right now

MIT Tech Review distills a rapidly evolving field into ten touchpoints that investors and regulators listen for. The list ranges from foundational capabilities, such as robust model governance and safety engineering practices, to emergent shifts in compute efficiency and cross-industry adoption. It’s a compact map to orient capital, policy, and product teams at a moment when the AI surge intersects with capital markets and regulatory scrutiny.

For decision-makers, the report is a prompt to interrogate portfolio risk with a sharper lens: are our pipelines transparent? Do we maintain a robust incident response posture? How do we demonstrate value while curating a safety-first culture across engineering, product, and compliance teams?

Tags: AI, trends, MIT Technology Review, AI10 2026. Source: MIT Technology Review.

Article 9 — The AI safety map grows sharper as Altman is attacked, a warning to the industry

The Verge paints a moment of heightened policy sensitivity around leadership, safety, and governance. An incident surrounding Sam Altman ripples through the industry’s risk calculus, sharpening the public conversation about safety, accountability, and the human costs of rapid deployment. The map tilts toward a more vigilant posture: policymakers, researchers, and executives refocus their questions on control, intent, and consequence in AI systems that scale in capability as quickly as they scale in reach.

For practitioners, the takeaway is not about fear but about discipline: clarity on governance lines, traceability of decisions, and the ability to pause or reroute when risk signals emerge. The episode becomes a case study in building trust through visible guardrails, independent review, and transparent dialogue with the public—an essential aspect of turning AI’s promise into durable, societal value.

Tags: OpenAI, policy, safety, governance, Altman. Source: The Verge AI.

Article 10 — Commvault launches AI Protect with undo for cloud AI workloads

The data layer remains the strategic battlefield: Commvault introduces AI Protect, delivering an undo for cloud AI workloads—a governance-first approach to operational control in autonomous software fleets. The ability to reverse, reconcile, and audit automated actions addresses a core fault line: if AI makes decisions, can we safely unwind them when outcomes drift, data quality degrades, or policy constraints are violated?

The development signals a broader shift toward governance abstractions that travel with compute. It’s not about halting automation; it’s about giving teams a reliable, reversible control plane that preserves data integrity, tracks lineage, and enables rapid recovery without compromising velocity. As AI fleets scale across industries, such primitives become not just features but prerequisites for trusted automation.
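
The idea of a reversible control plane can be sketched in a few lines. This is a generic undo-journal pattern under stated assumptions, not Commvault's implementation: `ReversibleAction`, `UndoJournal`, and the in-memory `store` are hypothetical, and each automated action is assumed to ship with its own inverse.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ReversibleAction:
    """An automated change paired with the step that reverses it."""
    name: str
    apply: Callable[[], object]
    undo: Callable[[], object]


@dataclass
class UndoJournal:
    # Actions are kept in the order they were applied (the lineage).
    applied: list[ReversibleAction] = field(default_factory=list)

    def run(self, action: ReversibleAction) -> None:
        action.apply()
        self.applied.append(action)

    def rollback(self) -> list[str]:
        """Undo every recorded action, newest first, and report what was undone."""
        undone = []
        while self.applied:
            action = self.applied.pop()
            action.undo()
            undone.append(action.name)
        return undone


# Usage: an agent changes a hypothetical config store, then the changes are unwound.
store = {"threshold": 0.5}
journal = UndoJournal()

journal.run(ReversibleAction(
    "raise-threshold",
    apply=lambda: store.update(threshold=0.9),
    undo=lambda: store.update(threshold=0.5),
))
journal.run(ReversibleAction(
    "add-tag",
    apply=lambda: store.update(tag="reviewed"),
    undo=lambda: store.pop("tag"),
))

undone = journal.rollback()
```

Undoing in reverse order matters: later actions may depend on earlier ones, so unwinding newest-first restores the original state without leaving intermediate inconsistencies.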

Tags: AI, governance, data protection, MLOps, cloud. Source: AI News.

Article 11 — Chrome now supports repeatable AI skills across tabs

The browser becomes a platform for continuity in cognitive work. Chrome’s ability to save Gemini prompts as Skills across tabs marks a shift from ephemeral, tab-scoped AI to persistent, browser-wide capability. It’s a quiet revolution in user experience: AI thoughts, actions, and routines become portable across contexts—research, shopping, coding, and collaboration—without losing the thread of intent.

Beyond convenience, the development raises questions about ownership of these AI skills: who authored the workflows, how are they shared, and how do organizations govern the reuse of automation logic across teams? The answer lies in the same place governance usually begins: policy-enabled tooling, auditable provenance, and a culture that prizes reproducibility as a feature, not an afterthought.

Tags: Chrome, Gemini, skills, Google, browser. Source: The Verge AI.

Article 12 — Google's SynthID watermarking system faces reverse engineering questions

Watermarking AI outputs sits at the intersection of art, science, and law. The Verge reports on ongoing debates around SynthID’s resilience in the face of reverse engineering, a reminder that even the most precise provenance marks must withstand adversarial scrutiny if we expect media to be trusted at scale. The debate is less about a single system and more about a framework: how to guarantee authenticity without enabling misuse, how to balance openness with integrity, and how to ensure watermarking survives transformative modifications.

For practitioners, the takeaway is a call to design watermarking as a lifecycle capability—embedded in data pipelines, audited at each stage of transformation, and complemented by policy guardrails that define acceptable uses and remedies when provenance is questioned. The market’s appetite for trust will reward those who prove provenance can endure the most challenging permutations of AI-generated content.

Tags: AI watermarking, SynthID, provenance, trust. Source: The Verge AI.

Article 13 — Google rolls out native Gemini app for Mac, adding a desktop AI helper

A native Gemini app for Mac makes AI a constant companion on the desktop, embedded within the ecosystem professionals already inhabit. The desktop AI helper promises a tighter feedback loop: file creation, content generation, and data analysis become more immediate, more personal, and more context-aware. The result is a continuity of cognitive labor across devices and environments, a seamless weave of AI-enabled productivity into daily work.

Yet with this pervasiveness comes responsibility. Desktop AI tools intensify expectations for privacy protections, clear consent for data use, and robust opt-out options. In response, developers will need to pair device-level controls with enterprise governance frameworks—ensuring that personal workflows do not become corporate data liabilities and that users retain lucid visibility into how AI handles their content.

Tags: Gemini, Google, Mac, desktop AI. Source: TechCrunch.

Article 14 — The Verge covers Claude Code-like shifts as Adobe envisions AI editing

The creative stack is in flux. Adobe’s Claude Code-esque tools move into Creative Cloud, signaling a future where code-aware AI editing becomes a core capability for creatives. The ecosystem shifts from prompting to programmable pipelines: editors, designers, and developers collaborate with AI at a level that blurs the line between manual craft and algorithmic assistance.

The political economy of artistry is changing too. Standards for reproducibility, licensing, and attribution are no longer abstract concerns but operational requirements. As AI editing becomes a part of the professional toolkit, teams will demand deep integration with versioning, provenance, and legal coverage to ensure that creative authority remains with humans who steer the process.

Tags: Adobe, Claude Code, AI editing, creativity. Source: The Verge AI.

Article 15 — Hyundai expands into robotics and physical AI systems

The last frame of today’s gallery presents Hyundai’s pivot into robotics and physical AI systems. The discipline expands from software to the material world: AI agents coordinating autonomous machines that operate in real environments, from manufacturing floors to service robots and beyond. It’s a tangible manifestation of AI’s promise: intelligence that not only reasons about processes but also acts within them, turning predictive insight into kinetic capability.

The implications aren’t limited to engineering bravura. They touch on safety, supply chain resilience, labor dynamics, and the ethics of embodied AI. As vehicles, robots, and devices become more capable actors in society, governance will have to translate rapidly evolving capabilities into robust risk frameworks, clear accountability, and a public narrative that explains how machines reason, react, and interact with humans in real space.

Tags: AI, robotics, automotive, physical AI. Source: AI News.

Closing synthesis — A governance-forward horizon for enterprise AI

Today’s digest is not a checklist but a living sculpture of how a mature AI ecosystem reaches into every layer of enterprise life. It begins with the Agents SDK’s sandbox and the model-native harness that makes long-running automation plausible without surrendering control. It travels through trusted cyber access that lets defenders curate AI’s protective power with precision. It moves into the real-world orchestration of agentic workflows at the edge, where performance, reliability, and governance converge in daily operations.

The market responds with momentum: marketing platforms turning AI into steady revenue streams, hiring dynamics reframing what AI can and cannot do in the labor market, and a global governance dialogue that remains rigorous even as capabilities accelerate. The path forward is neither unchecked speed nor cautious stagnation but an integration of engineering discipline, policy clarity, and human-centric design. In that balance, trusted AI governance becomes not a constraint but a differentiator—enabling smarter automation that respects risk, protects truth, and composes value across the entire enterprise.

Source mix: OpenAI, TechCrunch AI, The Verge AI, AI News, MIT Tech Review. Images used as visual anchors: Article 9, 11, 12, 13, 14.

Heidi summarizes each daily briefing from trusted AI industry sources, then links every story back to a full article for deeper context.