Google's TPUs for the agentic era

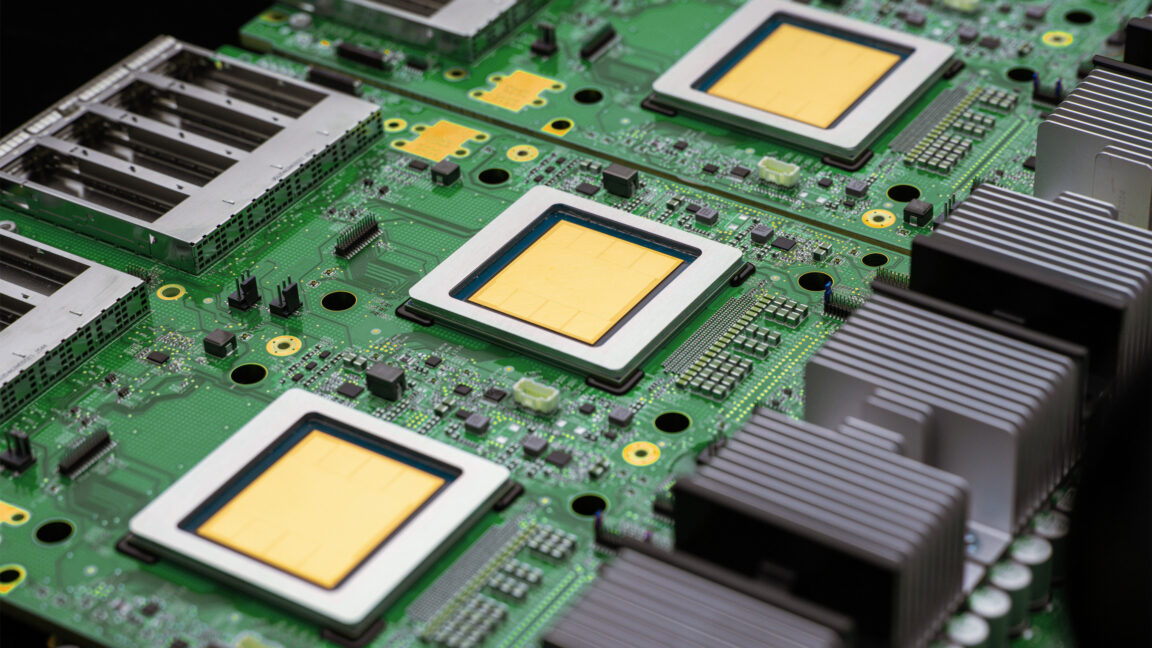

Google has announced two new TPUs aimed explicitly at the computational demands of agentic AI: one optimized for training and the other for inference. The move signals that Google intends to compete not only in software but also in the hardware stack that underwrites real-time decision-making, planning, and multi-agent coordination. The architectural emphasis on low-latency inference and high-throughput training suggests a design philosophy tailored to running complex, multi-step agent loops. In practical terms, data centers and cloud customers could see faster model iteration, more responsive agents, and better scalability for enterprise-grade workflows. The strategic implications extend to Nvidia, whose dominance in the accelerator market could come under pressure as cloud providers diversify their AI infrastructure.

However, the shift also invites scrutiny of supply chains, power consumption, and integration complexity. As agents become more capable and more widely deployed, the need for energy-efficient accelerators and robust software ecosystems grows. Google’s hardware direction fits broader industry trends toward specialized AI chips, domain-optimized accelerators, and advanced compiler stacks that translate high-level agent logic into efficient, low-latency execution. For researchers and practitioners, the development underscores the importance of cross-layer optimization, from model design to hardware mapping, in unlocking the next generation of agentic AI.

Key takeaways: hardware specialization accelerates agentic AI; cloud competition intensifies; energy and software stack optimization become critical success factors.