April 1, 2026 AI Digest: OpenAI funding, Claude Code leaks, on-device ML, and the race to enterprise-grade intelligence

A day of high-stakes AI moves—from on-device acceleration and major code leaks to OpenAI’s fundraising and enterprise-focused breakthroughs—reshapes risk, governance, and the next wave of practical deployments.

On-device AI just learned to sprint. The edge is shedding latency like a second skin, rewriting privacy as a feature rather than a constraint. In a world where OpenAI is bunkered behind colossal compute and Claude is navigating governance storms, the quiet revolution unfurls where data is born: on hardware you own, in memory you control, with no round trips to cloud servers required for the essentials.

Today’s briefing threads the needle between spectacular fundraising and literal hardware shifts—the kinds of moves that don’t just augment productivity, they rewire risk, governance, and the entire value chain of enterprise intelligence. The headlines are loud, yes, but the living gallery is in the subtle, tactile gains: faster inferences, tighter memory budgets, and a world where a laptop can hum along with models once tethered to data centers.

As the age of frontier AI accelerates, the real drama is not merely who raises more capital or which leak makes headlines, but how the architecture—on-device, multimodal, governable—makes intelligence more durable, auditable, and deployable at scale. Welcome to a day in the living museum of enterprise-grade intelligence, where the canvas is code, the frame is silicon, and the future is already rendering in real time.

| Metric | Value | Signal |
|---|---|---|
| OpenAI fundraising round | $122B fund | ↑ frontier AI deployment |
| Runway funding for builders | $10M | ↑ startup acceleration |
| Nomadic funding | $8.4M | ↑ data governance for AV streams |
| Slack AI features | 30 features | ↑ enterprise automation |

The Edge of Privacy: On-device AI accelerates

Where data was once a passport to the cloud, it is increasingly a badge of sovereignty. Ollama’s MLX on Apple Silicon demonstrates that local inference can be both blisteringly fast and memory-efficient, a combination that quietly upends the calculus of model deployment. In private workloads and regulated environments, the device becomes the primary compute plane, not a staging ground for cloud-based inference. It’s not nostalgia for edge-only compute; it’s a recognition that privacy, latency, and governance converge most cleanly when data never leaves the device in the first place.

The practical upshot is a healthier risk posture for regulated industries, a smoother path to compliance, and a foundation for privacy-preserving AI services that can scale within an enterprise without bending to the whims of a distant data center. When memory is tight and power is precious, the ability to compress, stream, and recycle weights on-device becomes an operational imperative, not a boutique capability.
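To make "memory is tight" concrete, here is a back-of-the-envelope sketch of how quantization changes the footprint of model weights. It is illustrative arithmetic only: the 7-billion-parameter model, the 8 GiB device, and the 25% headroom are hypothetical figures, not numbers from the Ollama or MLX reporting, and the estimate ignores activations, KV cache, and runtime overhead.

```python
def weight_memory_gib(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / (1024 ** 3)


def fits_on_device(num_params: float, bits_per_weight: float,
                   device_memory_gib: float, headroom: float = 0.25) -> bool:
    """Check whether the weights fit after reserving a fraction of memory.

    `headroom` is a hypothetical safety margin for the OS, activations,
    and cache; real budgets depend on the runtime and workload.
    """
    budget = device_memory_gib * (1 - headroom)
    return weight_memory_gib(num_params, bits_per_weight) <= budget


if __name__ == "__main__":
    params = 7e9  # hypothetical 7B-parameter model
    for bits in (16, 8, 4):
        size = weight_memory_gib(params, bits)
        ok = fits_on_device(params, bits, device_memory_gib=8)
        print(f"{bits}-bit weights: {size:.2f} GiB, fits in 8 GiB: {ok}")
```

Under these assumptions the same model moves from out of reach at 16-bit precision to comfortably resident at 4-bit, which is the kind of trade-off that makes local inference an operational question rather than a boutique one.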

As this shift gains momentum, expect more tools that bridge local inference with secure model marketplaces, more accelerators tuned to privacy-first workloads, and more enterprise stacks that treat devices as first-class citizens of the AI fabric. The future of AI is not only intelligent; it is intimate—built into the hardware you already own.

Pull quote: “On-device inference isn’t a feature. It’s the default setting for responsible, auditable AI.”

The edge isn’t a fallback—it’s the core. Local models, memory-aware runtimes, and privacy by design will redefine enterprise AI.

— Ars Technica

Source: Ollama MLX on Apple Silicon

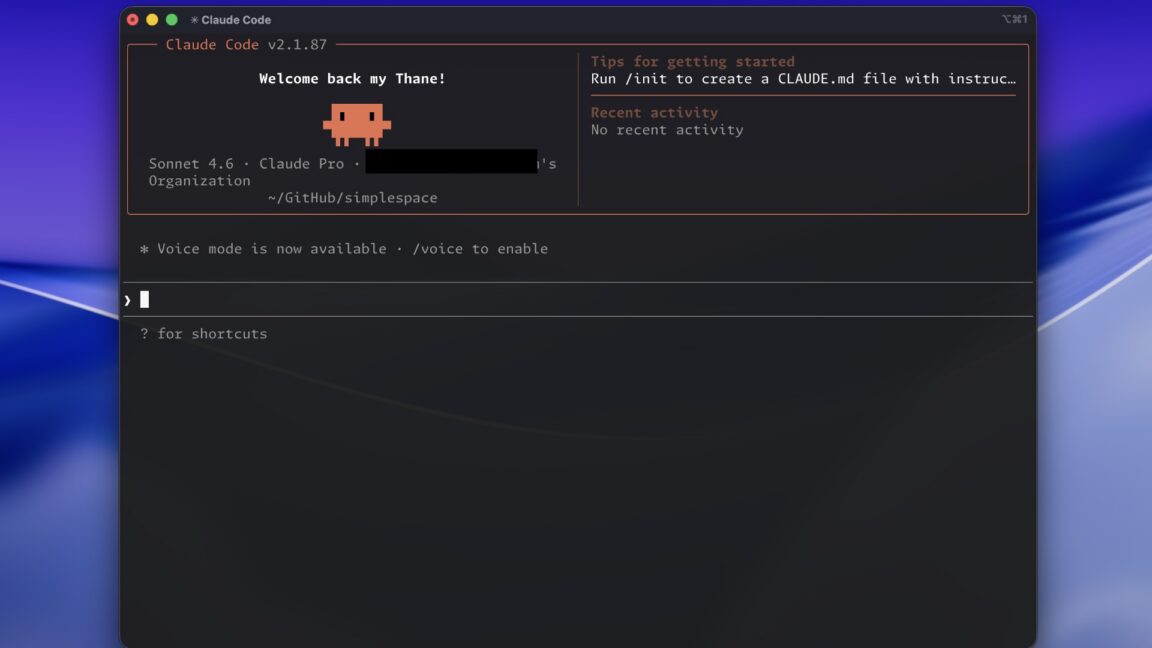

Claude Code: governance, leakage, and the code safety chorus

Code leakage is not a single event; it’s a bellwether for a broader struggle between rapid tooling and responsible governance. The Claude Code leak unmasks the fragility of AI agents, the fragility of development tooling, and the fragility of the controls that should weather the storm. When a Tamagotchi-like agent with open-ended play remains tethered to a developer’s internal map, the entire supply chain becomes a liability vector—unless governance is baked into the software from the first commit.

Security becomes not a checkbox but a design principle: robust access controls, verifiable provenance of code, and transparent security postures across the tooling ecosystem. The CLI leak adds another layer of urgency, turning the spotlight toward secure software supply chains and the need for granular permissioning that travels with every build, test, and deployment.
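One small piece of "verifiable provenance" can be sketched as digest pinning: record a SHA-256 hash for each build artifact and refuse anything that does not match. This is a minimal illustration, not a description of how any vendor's pipeline works; the function names, the manifest layout, and the `cli.js` file name are all hypothetical, and a real supply chain would also sign the manifest itself.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of `data` as a hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(name: str, data: bytes, manifest: dict[str, str]) -> bool:
    """Check an artifact against a pinned digest in a (hypothetical) manifest.

    Unknown artifacts are rejected rather than trusted by default.
    """
    expected = manifest.get(name)
    if expected is None:
        return False
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(sha256_hex(data), expected)


if __name__ == "__main__":
    released = b"console.log('ok')"
    manifest = {"cli.js": sha256_hex(released)}  # pinned at release time
    print(verify_artifact("cli.js", released, manifest))
    print(verify_artifact("cli.js", released + b" // tampered", manifest))
```

The deny-by-default branch is the design choice worth noting: an artifact that is missing from the manifest is treated the same as one that was altered.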

In governance circles, the Claude saga is a case study in the asymmetry between ambition and safety. It’s a reminder that robust enterprise AI requires not just powerful capabilities but disciplined governance frameworks that occupy the same strategic stage as the models themselves.

Pull quote: “Code exposure threatens the very governance scaffolding that enterprises rely on for AI tooling.”

Security and governance are not accidental; they must be engineered into the toolchains that power AI agents.

— Ars Technica

Sources: The Verge AI, Ars Technica

Enterprise AI acceleration: productized intelligence meets investor appetite

From multimodal document intelligence to AI-enabled collaboration, the enterprise software stack is being rewritten to accommodate real-time intelligence at scale. Granite 4 Vision signals a new class of enterprise AI that fuses vision, language, and structured data into workflows that actually move the needle in business operations. Slack’s 30-feature overhaul is not a vanity project; it’s a blueprint for governance-aware automation that preserves compliance while accelerating decision cycles.

Meanwhile, the fundraising pulse remains unshaken. OpenAI’s frontier AI push is expanding not just the ambition but the tempo of deployment, with capital and compute marching in lockstep to equip enterprises with tools that were once available only in the lab. The consequence is a market where architecture, data governance, and user-centric tooling co-evolve, turning AI from a risk vector into a productivity engine.

In practice, this means a new operating rhythm for IT: modular, auditable, and scalable AI that blends the best of cloud-scale models with on-premises resilience. It’s not a retreat from cloud; it’s a redefinition of what the cloud must protect and how it must interface with the business user.

Pull quote: “The frontier AI push is not a speculative rumor; it’s a manufacturable system, designed to operate at enterprise scale with governance baked in.”

Enterprise AI is moving from pilots to operating systems—where governance, data lineage, and user empowerment sit at the core of every deployment.

— TechCrunch AI

Sources: OpenAI fundraising (TechCrunch AI), OpenAI frontier compute (OpenAI Blog), Salesforce Slack features (TechCrunch AI), Nomadic funding (TechCrunch AI), Runway fund (TechCrunch AI)

The horizon of threat and opportunity in quantum-aware enterprise AI

Quantum threats to cryptography are no longer the stuff of lab folklore. As cryptosystems feel pressure from evolving quantum techniques, enterprises must bake post-quantum defenses into their long-term key management and data protection strategies. The risk isn’t theoretical—it’s a calendar, counting down to the moment when classical schemes crack under quantum pressure. The same hardware evolution that powers on-device AI also recalibrates what “secure” means in a world where models roam beyond the data center and governance must keep pace with capability.

Yet fear is not the only driver. The same quantum-literate mindset that complicates cryptography also accelerates the adoption of next-gen AI in enterprises: more resilient, more auditable, and more capable systems that operate with a governance-first ethos. The landscape is shifting from “can we build it?” to “how safely can we deploy it at scale?”—and the answer hinges on a layered approach that blends secure hardware, robust software supply chains, and continuous risk assessment.

In this moment, the gallery quiets and the loudest signal is not one headline but a cadence: open platforms, regulated experimentation, and a tactile focus on reliability. The future belongs to those who design intelligence that behaves, not just intelligence that can. If today’s innovations are a toolkit, then tomorrow’s enterprise AI will be a curated, auditable, enterprise-grade instrument—capable of transforming operations while resisting mischief in the margins.

Horizon thought: The next phase of AI is less about bigger models and more about safer, more governable deployments that empower people to act with confidence and transparency.

Security, governance, and reliability must be embedded—like a frame around a living canvas of intelligent work.

— Ars Technica

The Horizon: Looking Ahead

The day’s threads converge on a single thesis: enterprise AI will survive and thrive only if it bridges imagination and auditability. The capital tides around frontier AI signal ambition, but the operational craft is what makes deployment reliable. Edge-native inference, governance-aware tooling, and enterprise-grade workflows are no longer options; they’re prerequisites for scale. The market is learning to tolerate—not celebrate—the friction between rapid iteration and responsible governance. The outcome will be a more resilient AI, deployed where it matters most: at the point of need, in the hands of people who must trust it to act with accountability and discipline.

As organizations integrate multimodal intelligence into document workflows, customer interactions, and product development, the tempo will rise. But so too will the standards: provenance, visibility, and continuous improvement. The gallery’s last act is not a single revelation but a sustained practice—an operating model where AI systems are engineered for reliability, not just potential. If today’s headlines teach anything, it’s that the future belongs to those who treat safety as a feature and governance as a capability, not a checkbox.

So we step forward, inventorying the artifacts—the memory-efficient MLX, the governance conversations triggered by Claude, the enterprise features reshaping Slack’s workflows, and the security lessons that quantum-aware cryptography demands. The room is full of objects that power tomorrow’s decisions, and the walls whisper a single verdict: enterprise-grade intelligence isn’t a destination; it’s a discipline of design.

Closing thought: the living gallery is open, and the exhibit is evolving as you read. The only thing to do is lean in, ask better questions, and build with intention—because the future of enterprise AI is not a mystery to be solved, but a system to be shaped.

Source synthesis: This horizon stitches together threads from articles on OpenAI fundraising and frontier AI (TechCrunch AI), Claude governance and leaks (The Verge AI, Ars Technica), Slack’s enterprise features (TechCrunch AI), Nomadic and Runway funding (TechCrunch AI), and cryptography threats (Ars Technica).

Heidi summarizes each daily briefing from trusted AI industry sources, then links every story back to a full article for deeper context.