AI News Digest — Saturday Spotlight: April 18, 2026

A wave of OpenAI- and Google-focused AI business bets collides with Claude’s rising presence and enterprise AI governance. This Saturday highlights OpenAI’s ecosystem shifts, Google’s AI-mode advances, and Claude’s design and Opus breakthroughs, plus practical AI tooling and policy-adjacent analysis.

A living gallery through 24 dispatches — each a panel, each a future unfolding.

OpenAI’s Vision for the AI Economy reshapes the bets around public wealth, automation, and a four-day week

The OpenAI vision glints with an audacious premise: to reframe the economics of scale as AI moves from narrow automation toward broad societal leverage. Think public wealth funds that ride autonomous productivity rails, a tax architecture calibrated to robotic labor, and a four-day cadence that recalibrates tempo without stalling progress. The narrative isn’t naive; governance and policy friction sit at the center, a reminder that velocity, the magic trick of AI, must be balanced by institutions that prevent drift into fragility. In practice, this is a tapestry of new instruments meeting the nervous system of modern policy, an invitation to governments, markets, and researchers to co-author a different distribution of opportunity.

OpenAI leadership churn: Weil and Peebles depart as OpenAI pivots toward enterprise and Sora scrubs

When leadership shifts, the room breathes differently. Weil and Peebles’ departures signal OpenAI’s recalibration toward enterprise-grade platforms and core pillars, a move likely to redraw product roadmaps and partnerships. It’s a quiet revolution dressed in executive silhouettes—less about daring horizons and more about robust, auditable execution that can scale inside risk-aware organizations. Sora’s scrubbing of side quests further tightens the feedback loop: focus becomes velocity with governance, and autonomy becomes a feature of disciplined architecture rather than a marketing flourish.

Source: TechCrunch AI

Codex for almost everything: OpenAI expands developer workflows with new macOS/Windows capabilities

Desktop-scale Codex augments local environments with memory, image generation, and plugins, tightening the loop between ideation and live execution. The update isn’t merely a feature sprint; it encodes a philosophy: agents that remember context, render visuals, and plug into a personal toolkit are not luxuries but baseline capabilities. The boundary between coding and commanding tools blurs, inviting teams to orchestrate more fluid, end-to-end workflows with fewer handoffs and less cognitive load.

Source: The Verge AI

The next evolution of the Agents SDK: native sandbox execution and model-native harness

Sandboxing is no longer a luxury; it is the backbone of practical autonomy. The Agents SDK now champions long-running, secure agents that persist across files and tools, governed by auditable constraints rather than ad hoc power. The architecture leans into governance as a feature: you can trust an agent not by wishful thinking, but by verifiable containment and robust harnessing. In a landscape of increasingly capable agents, this is the leap from clever demo to enterprise-grade operation.

Source: OpenAI Blog

GPT-Rosalind: OpenAI’s frontier model accelerates life sciences research

Frontiers, proteins, and pathways bow to a model trained on the language of biology: GPT-Rosalind maps drug discovery, genomics, and protein reasoning with a scientist’s intuition and an engineer’s throughput. This is a keystone moment where frontier AI doesn’t drift into abstraction but sinks into practice, turning complex workflows into modular, repeatable processes. The governance of data, reproducibility, and validation remains crucial—yet the acceleration it promises could redefine how researchers triage hypotheses, simulate molecules, and iterate experiments at speeds previously unimaginable.

Source: OpenAI Blog

OpenAI-led push to cyber defense: Trusted Access, GPT-5.4-Cyber, and API grants

Security evolves as a product, not a patch. Trusted Access for Cyber and GPT-5.4-Cyber embed a security posture into the very cadence of development, from API grants that seed defenders with real-world tools to governance constructs that demand auditable chain-of-custody. The message is lucid: resilience is a design constraint, not a charitable bonus. As the defense ecosystem grows, the architecture must be both open and disciplined, enabling collaboration without inviting exploitation.

Source: OpenAI Blog

The Abstraction Fallacy: Can AI truly instantiate consciousness, or only simulate it?

A provocative DeepMind-backed analysis asks: is AI ever truly conscious, or do we mistake sophisticated simulation for sentience? The inquiry travels beyond semantics, touching governance, ethics, and the implicit social contract of intelligent agents. If we accept that cognition can be mimicked with astonishing fidelity, does that upgrade responsibility or merely complicate accountability? The debate is less about a verdict today than the architecture we build tomorrow—where claims of understanding are matched by transparent demonstrations, auditable reasoning traces, and robust fail-safes.

Source: DeepMind/Scholar Thread

Google’s AI Mode: open links without leaving the page, a smoother AI-assisted browsing experience

AI Mode fuses context and continuity, surfacing sources alongside pages without forcing a detour in the reader’s mental model. It’s a small but meaningful enhancement—one that reduces friction between following a thread and evaluating its provenance. The pragmatic implication is a more trustworthy browsing experience, where the chain of reasoning remains visible and portable as you weave through claims, counterclaims, and corroborating data across the web.

Source: The Verge AI

Gemini pulls from Google Photos to tailor images and responses with Nano Banana 2

Personalization accelerates when the AI taps a user’s own imagery. Gemini Personal Intelligence now leans on Google Photos to craft responses and visuals with Nano Banana 2’s image model—an approach that brings context into every reply, while placing privacy at the center of the design. The promise is a more intimate, useful assistant; the challenge is maintaining trust as memory compounds with behavior across time, ensuring consent, control, and transparent data usage remain non-negotiable.

Source: The Verge AI

Anthropic’s Claude Opus 4.7 debuts for advanced software engineering and image tasking

Opus 4.7 raises the bar for engineering-heavy tasks and image analysis, signaling Claude’s determined foray into enterprise-grade capabilities. It’s a reminder that the frontier in AI tooling is not solely about smarter models but about richer, safer workflows that align with legacy software ecosystems and security demands. For teams, this is less a headline and more a new baseline: the ability to generalize across code, images, and pipelines without sacrificing governance or reliability.

Source: The Verge AI

Anthropic launches Claude Design to simplify quick visuals for founders and product managers

Claude Design targets the non-designer workflow with accessible, rapid visuals, bridging imagination and execution. It’s a disciplined counterpoint to the culture of “build first, polish later,” offering a streamlined path for founders and product managers to prototype, iterate, and align on strategy through visuals. The larger arc: AI tooling that moves from heroic demos to everyday operational support, bringing design thinking into daily decision-making while preserving governance and oversight.

Source: TechCrunch AI

Dairy Queen’s drive-thru gets an AI chatbot, speeding service and upsizing orders

An everyday ritual, ordering soft serve and fries, meets real-time AI orchestration at the drive-thru. The chatbot speeds service, tailors upsells, and nudges toward higher-value meals, signaling how retail channels become experiments in customer experience at scale. The broader implication is clear: AI’s frontline presence is not a boutique add-on but a normalizing force that reshapes the pace, personalization, and profitability of consumer interactions, one order at a time.

Source: The Verge AI

OpenAI Codex updates expand macOS/Windows integration, memory, image generation, and plugins

The Codex toolkit extends beyond the browser: persistent memory, desktop-native workflows, and plugins widen the horizon for developers who want to bend tools to their will in local environments. This isn’t just polish; it’s the scaffolding for sustained developer velocity: machines that remember, retrieve, and render in place, with fewer context switches and fewer dropped cycles. As the line between AI and IDE dissolves, governance becomes the guardrail that preserves intent, security, and reproducibility across teams.

Source: The Verge AI

The AI is inevitable: Vergecast dives into AI divides, Altman, and the hype curve

Vergecast unpacks the friction between acceleration and accountability, threading Altman’s leadership style with the social and policy questions that come with rapid capability. The conversation isn’t merely about tech hype; it’s a governance anatomy lesson: how to align incentives, manage externalities, and ensure public trust when the pace of change could outstrip institutions. The result is not doom or triumph but a sober choreography: fast experimentation, slower approval, and a continuous recalibration of what constitutes responsible progress.

Source: The Verge AI

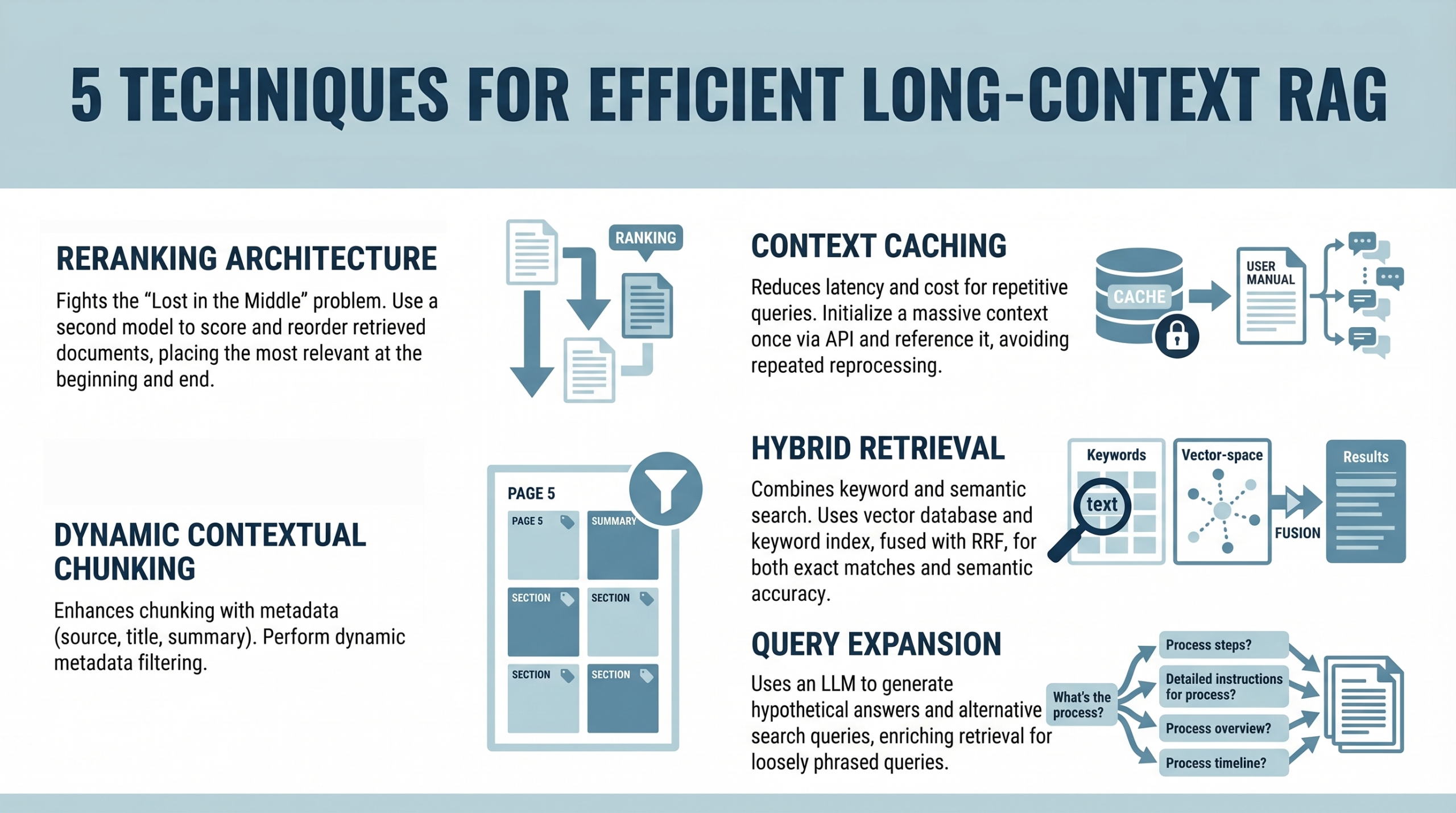

5 Techniques for Efficient Long-Context RAG is a TopList you’ll want to bookmark

A curated TopList distills pragmatic techniques for long-context retrieval-augmented generation. The emphasis is on turning concept into production: robust embeddings, chunking strategies, caching heuristics, and evaluation protocols that survive real-world data drift. It’s not a flashy manifesto but a field manual for teams building memory-rich systems at scale, ensuring that context isn’t a luxury but a predictable, measurable asset in user experiences and business outcomes.

Source: Machine Learning Mastery
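Two of the techniques named in the summary above, chunking and caching, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the method from the linked article: `chunk_words`, `toy_embed`, and the window sizes are hypothetical names and values chosen for the example, and the "embedding" is a stand-in letter count where a real system would call an embedding model.

```python
from functools import lru_cache


def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into windows of `size` words; neighbors share `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this window already reaches the end of the text
    return chunks


@lru_cache(maxsize=4096)
def toy_embed(chunk: str) -> tuple[int, ...]:
    """Placeholder embedding (letter counts); assumed stand-in for a model call."""
    lowered = chunk.lower()
    return tuple(lowered.count(c) for c in "abcdefghijklmnopqrstuvwxyz")


if __name__ == "__main__":
    pieces = chunk_words("a b c d e f g h i j", size=4, overlap=1)
    print(pieces)
    vectors = [toy_embed(p) for p in pieces]
    print(len(vectors), toy_embed.cache_info())
```

The overlap keeps a sentence that straddles a boundary visible in both neighboring chunks, and the cache means a chunk that appears again is not embedded twice. Both sizes would need tuning against a real evaluation set.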

OpenAI’s big Codex update is a direct challenge to Claude Code in the race for developer tooling

The multi-model, multi-tool stack proposition intensifies the developer tooling duel. Codex upgrades push memory, desktop integration, and orchestration capabilities into the same ecosystem as Claude Code, pairing speed with governance. Enterprises stand to gain a unified, auditable environment where cross-tool workflows reduce friction, while security and compliance stay embedded in the fabric of tool choice, API usage, and deployment patterns.

Source: The Verge AI

MIT Technology Review: making AI operational in constrained public sector environments

In government contexts where budgets are lean and oversight is strict, small language models and auditable deployments emerge as the practical backbone of AI adoption. The core argument is governance-led, not gimmick-led: speed matters, but only when it arrives with transparent decision chains, rigorous testing, and traceable data lineage. The result is a blueprint for responsible elasticity, a way to enjoy the benefits of AI while keeping faith with public accountability and citizen trust.

Source: MIT Technology Review

MIT Technology Review: treating enterprise AI as an operating layer—ownership, governance, and value capture

The operating layer concept reframes AI as a platform of governance, data control, and sustainable value capture rather than a transient performance metric. Ownership questions—who leads, who controls data, who bears risk—start to dictate architecture choices, from data provenance to model monitoring. In this view, success is less about raw model power and more about disciplined productization: repeatable, auditable, and accountable AI that scales with enterprise needs without surrendering governance discipline.

Source: MIT Technology Review

Dawn of a new AI tooling era: Python decorators for production ML engineering

Decorators emerge as a pragmatic bridge between exploratory notebooks and production pipelines. The article distills patterns for handling orchestration, logging, and reliability, turning what used to be brittle code into reproducible, observable systems. This is the quiet revolution of AI tooling: workflows that behave, monitor, and recover gracefully, even as data scales, models diversify, and deployments multiply across teams and domains.

Source: Machine Learning Mastery
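To make the decorator idea above concrete, here is a minimal sketch of two such wrappers: one for retries and one for timing. It is not taken from the linked article. The names `retry`, `timed`, and `flaky_predict`, and the logger name `ml_pipeline`, are hypothetical and exist only for illustration. Only the standard library is used.

```python
import functools
import logging
import time

logger = logging.getLogger("ml_pipeline")  # hypothetical logger name


def retry(times: int = 3, delay: float = 0.0, exceptions: tuple = (Exception,)):
    """Retry the wrapped function for up to `times` attempts, logging failures."""
    if times < 1:
        raise ValueError("times must be at least 1")

    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(1, times + 1):
                try:
                    return func(*args, **kwargs)
                except exceptions as exc:
                    logger.warning("%s failed (attempt %d/%d): %s",
                                   func.__name__, attempt, times, exc)
                    if attempt == times:
                        raise  # out of attempts: surface the last error
                    time.sleep(delay)
        return wrapper
    return decorator


def timed(func):
    """Log the duration of each call, including calls that raise."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return func(*args, **kwargs)
        finally:
            logger.info("%s took %.4fs", func.__name__,
                        time.perf_counter() - start)
    return wrapper


calls = {"count": 0}


@timed
@retry(times=3)
def flaky_predict(x: float) -> float:
    """Hypothetical model call that fails twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("model server unavailable")
    return x * 2


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    print(flaky_predict(2.0))
```

Stacking `@timed` outside `@retry` means the logged duration covers all attempts. Reversing the order would time each attempt separately instead.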

Tokenmaxxing and the AI anxiety gap: a critical podcast roundtable

The roundtable dives into the economics of AI in practice—the spending, the bottlenecks, and the equity gaps that arise when tooling becomes increasingly commodified. It’s a conversation about governance, access, and the way money flows through the AI supply chain. The takeaway isn’t sensationalism but a sober map: investment must be paired with accountability, so the benefits distribute broadly rather than concentrating in the hands of a few early adopters.

Source: TechCrunch AI

OpenAI’s much-anticipated Codex update solidifies a multi-model, multi-tool development stack

A cross-model orchestration narrative takes shape as Codex embraces multi-tool pipelines and richer tool use. The enterprise interpretation is clear: teams want flexible stacks that harmonize memory, tool access, and cross-model flows without compromising governance. It’s a practical architecture shift that aims to accelerate software engineering while preserving auditable provenance and security as core product attributes.

Source: The Verge AI

AI governance and the enterprise: from hype to operating layer reality

The governance-first argument returns with renewed force: enterprise AI succeeds when leadership, data governance, and a disciplined operating layer align with business outcomes. Model performance remains essential, but governance, risk controls, and sustainable data practices define the ceiling of real-world impact. The piece reframes the conversation from “how smart is the model?” to “how reliable, auditable, and integrated is the entire system that uses the model?” The answer, increasingly, is less about spectacular results and more about repeatable value—delivered safely, at scale, and with accountability baked in.

Source: MIT Technology Review

Diving into practical AI tooling: enterprise-ready patterns for production ML

The piece is a pragmatic field guide to tools, governance patterns, and reproducibility workflows that survive scale. It argues for a deliberate emphasis on tooling quality as a foundation for reliable production ML: versioned datasets, audit trails, deterministic pipelines, and clear ownership. The takeaway: the most durable AI deployments aren’t born of cleverness alone; they’re built with engineering rigor that keeps pace with data and demand, paving the way for responsible, resilient AI at enterprise scale.

Source: Hugging Face Blog

The next evolution of the Agents SDK: governance and execution in multi-tool deployments

The final gesture of today’s gallery walk is a reminder that autonomy must be contained within governance envelopes that are as sturdy as their execution. The Agents SDK advances with sandboxing and a model-native harness to support secure, auditable, multi-tool deployments at scale. This is the culmination of a throughline: autonomy, when properly engineered, becomes a collaborative partner—capable of meaningful action while remaining transparent, compliant, and controllable by human operators who set the guardrails and define the performance expectations.

Source: OpenAI Blog

Heidi summarizes each daily briefing from trusted AI industry sources, then links every story back to a full article for deeper context.