April 23, 2026 AI News Digest — Thursday

A new wave of enterprise AI tooling, chip-level acceleration, and governance debates shape today’s AI landscape. From OpenAI’s workspace agents to Google’s new TPUs for agentic workloads, the day blends breakthroughs with policy and privacy considerations.

Today’s stories span the agentic era: enterprise automation, hardware arms races, governance, and the political economy of data. Each headline is a cue to rethink work, privacy, and the boundaries of intelligent agents.

OpenAI now lets teams make custom bots that can do work on their own

In a marked shift toward autonomous enterprise tooling, OpenAI is expanding its workspace agents so teams can deploy bots that execute business tasks with minimal human prompting. The change is more than a feature release; it is a structural move in which automation scales from copilots to fully autonomous operators that can monitor, decide, and act across a company’s digital tools. The promise is speed, resilience, and a new layer of orchestration, one where a ticketing bot can triage, route, and resolve issues without daily handoffs. The risks widen as well: governance, audit trails, and guardrails become a core design principle rather than an afterthought. As enterprises deploy these capabilities, automation and governance become harder to separate, and CIOs will need to set policy at the pace of product releases.

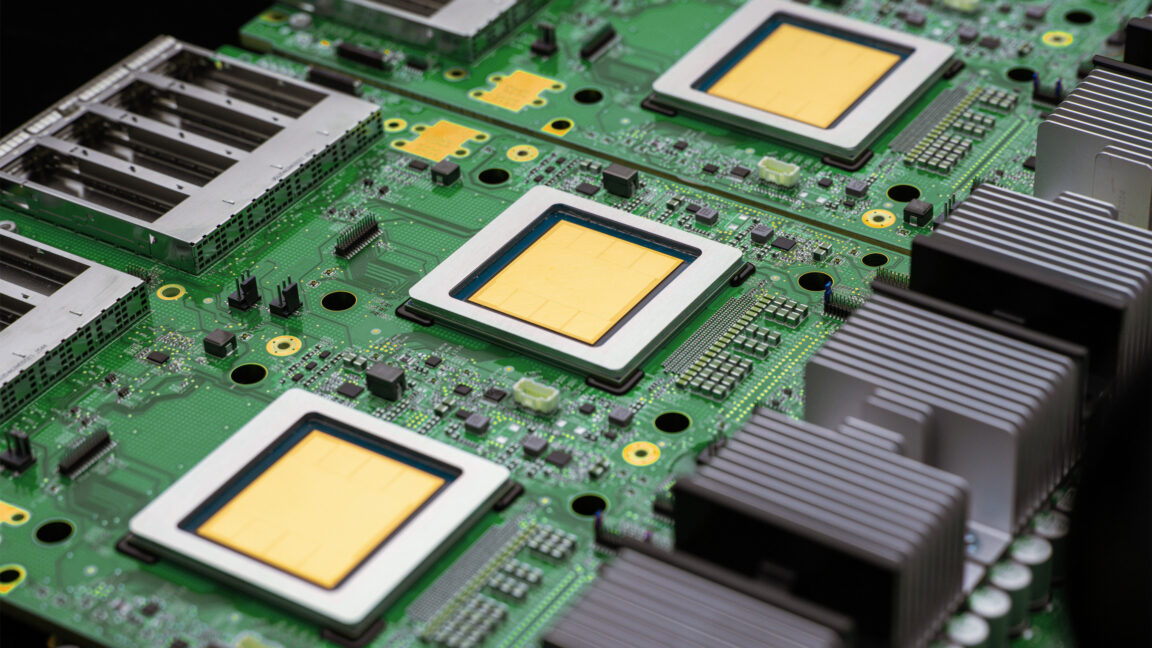

Google unveils two new TPUs designed for the agentic era

Google’s hardware push targets agent software directly: two new Tensor Processing Units are engineered to accelerate agent-based workloads, a strategic bet that compute itself should be a first-class partner in autonomous systems. The architecture emphasizes latency-conscious inference and scalable orchestration for multi-agent workflows, hinting at a future where chip design matters to the agent story as much as the API does. Expect tighter coupling with Gemini-derived tooling and hardware-software co-design that aims to shorten the loop between demand signals and autonomous action. As chipmakers recalibrate, the agentic era becomes as much hardware-driven as software-driven.

Google Meet will take AI notes for in-person meetings too

AI note-taking is moving from virtual rooms into conference rooms. Google is extending transcript-driven intelligence to in-person sessions, uniting Meet, Gmail, and Gemini-based assistants into a single thread of context. The impact is not merely convenience; it changes how follow-ups, decisions, and accountability are handled. A smarter meeting implies a smarter work ritual, one that auto-synthesizes action items, assigns owners, and coordinates cross-tool workflows, taking over work previously left to post-it notes and email threads. It’s a quiet but consequential nudge toward more continuous, audit-ready collaboration.

Google Cloud launches two new AI chips to compete with Nvidia

In a cloud hardware arms race, Google Cloud unveils two chips designed to outpace the incumbents in transformer workloads, inference, and real-time orchestration. The move raises the stakes in enterprise AI acceleration, signaling that hardware is no longer a footnote but a strategic lever in the autonomy playbook. If software orchestrates the agents, hardware sets the tempo by reducing latency, sharpening parallelism, and enabling more aggressive multi-agent deployments. The result could be a more accessible, cloud-native path to agentic workloads, but it also deepens the vendor lock-in and supply chain risk that enterprise buyers are already grappling with.

Now Meta will track what employees do on their computers to train its AI agents

Meta’s Model Capability Initiative takes a controversial approach: instrumenting employee activity to sharpen AI agents. The promise is more responsive, context-aware enterprise copilots, agents that understand how work actually unfolds by watching patterns of usage, tool interactions, and workflow sequencing. But the instrumentation comes with a price tag: privacy friction, governance ambiguity, and the risk of surveillance-heavy cultures eroding trust. Enterprises are at a crossroads where the value of data-fueled agents must be weighed against the human factors of autonomy, consent, and organizational accountability. If built with transparency and governance from the start, the approach could unlock a new tier of agent capability without surrendering employee dignity.

Making ChatGPT better for clinicians

OpenAI’s clinician-focused enhancements tilt ChatGPT from a general-purpose assistant toward a trusted healthcare companion. The aim is to reduce documentation burden, streamline clinical workflows, and accelerate research by giving verified U.S. clinicians access to specialized copilots wired to patient records, coding standards, and evidence-based guidelines. The shift anticipates regulatory nuance—data provenance, privacy, and risk scoring will be in the foreground as doctors lean on AI for triage, note-taking, and decision support. The healthcare AI frontier remains delicate: success will hinge on guaranteeing auditability, safeguarding patient privacy, and maintaining clinician agency in the face of algorithmic influence.

Introducing workspace agents in ChatGPT

A collaboration upgrade: ChatGPT gains workspace agents designed to automate intricate workflows across tools, enabling scalable team automation. The architecture promises to weave disparate tools into coherent, auditable processes, turning human-led orchestration into distributed automation that runs with a team’s consent. This is more than a feature; it is a shift in philosophy, from one-off copilots to interoperable agents that can interpret intent, sequence tasks, and propagate decisions across toolchains without constant prompting. The risk, as ever, is governance: who owns the provenance, who audits the decisions, and where do we draw the ethical lines in automated work?

Speeding up agentic workflows with WebSockets in the Responses API

The latency race in agent loops just got a boost. A deep dive into Codex agent loops reveals how WebSockets, coupled with strategic caching, trims the overhead of multi-agent orchestration. The performance edge matters not merely for speed but for reliability: when your agents must converse, negotiate, and execute in real time, the time saved can translate into tangible business impact—from faster decision cycles to more robust error recovery. The narrative here is practical: architecture as a moat, where every millisecond saved compounds into a smoother, more resilient automation fabric.
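To make the latency argument concrete, here is a back-of-the-envelope sketch. It is a toy model, not a measurement of the Responses API or of Codex: the function `loop_overhead_ms` and every number in it (20 turns, a 100 ms connection setup, 5 ms of per-message framing) are hypothetical values chosen only to show why paying connection setup once, rather than on every turn, compounds across an agent loop.

```python
def loop_overhead_ms(turns, handshake_ms, per_message_ms, persistent):
    """Estimate connection overhead (not model time) for an agent loop.

    turns          -- number of model round trips in the loop
    handshake_ms   -- assumed cost of establishing one connection
    per_message_ms -- assumed framing/serialization cost per round trip
    persistent     -- True for one long-lived connection (WebSocket-style),
                      False for a fresh connection on every turn
    """
    handshakes = 1 if persistent else turns
    return handshakes * handshake_ms + turns * per_message_ms


# Hypothetical inputs: 20 turns, 100 ms handshake, 5 ms per message.
per_request = loop_overhead_ms(20, 100, 5, persistent=False)
persistent = loop_overhead_ms(20, 100, 5, persistent=True)
saved = per_request - persistent

print(f"per-request connections: {per_request} ms")
print(f"persistent connection:   {persistent} ms")
print(f"overhead avoided:        {saved} ms")
```

Under these assumed inputs the saving grows linearly with the number of turns, which is why long agent loops benefit most. Real gains depend on network conditions, connection reuse, and caching behavior that this model leaves out.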

Workspace agents

OpenAI’s blog continues to explore scalable automation across teams, positioning workspace agents as the connective tissue of modern workflows. The narrative is less about a single application and more about a design ethos: agents that understand a team’s toolset, context, and governance constraints well enough to orchestrate cross-tool sequences. For leaders, the takeaway is a blueprint: design pipelines where agents are both capable operators and auditable actors, with policies baked into their autonomy.

OpenAI Privacy Filter

A privacy filter that detects and redacts PII in text marks a principled turn toward privacy-preserving AI. OpenAI frames this as governance-first design: data minimization, provenance clarity, and decoupled processing to minimize exposure without sacrificing utility. The principle matters: as AI becomes more woven into enterprise workflows, privacy controls must be as integral as performance benchmarks. The core policy question, how to balance usefulness with protection, will shape adoption trajectories and regulatory conversations alike.
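For readers unfamiliar with the detect-and-redact pattern, here is a minimal sketch. It is not OpenAI’s Privacy Filter and makes no claim about how that filter works; it assumes a simple regex-based approach, and the names `PII_PATTERNS` and `redact_pii` are hypothetical. The three patterns cover only emails, US-style phone numbers, and US Social Security numbers.

```python
import re

# Illustrative patterns only (an assumption of this sketch); a production
# filter would need far broader coverage and likely a learned model.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact_pii(text):
    """Replace each detected span with a [LABEL] placeholder.

    Returns the redacted text and a per-label count of replacements,
    which can serve as a simple audit record.
    """
    counts = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] = n
    return text, counts


sample = "Contact jane.doe@example.com or 555-123-4567; SSN 123-45-6789."
redacted, counts = redact_pii(sample)
print(redacted)
print(counts)
```

Returning counts alongside the redacted text is a deliberate choice: it records that something was removed without retaining the sensitive value itself.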

Anthropic’s Mythos dangerous AI model just fell into the wrong hands

Mythos, a cybersecurity-focused model from Anthropic, has reportedly been accessed by unauthorized users, underscoring the fragility of access control in a world of shared tools and cloud-hosted models. The incident spotlights the governance gaps that threaten to unravel even the best-intentioned safety layers. It’s not merely a breach tally; it’s a test of policy, credential hygiene, and the human factors that govern access in AI ecosystems. The episode reframes risk: a model is only as safe as its access surface, and trust in the architecture hinges on transparent incident response, rigorous auditing, and layered, evolving defenses.

AI needs a strong data fabric to deliver business value

MIT Tech Review argues that without a robust data fabric, AI’s value remains capped, especially across enterprise silos. A well-orchestrated fabric that is governed, interoperable, and privacy-aware turns raw data into a shared, trustworthy resource for copilots, analytics, and decision-support systems. The piece reframes data strategy as a competitive necessity: you don’t just buy models; you curate a resilient data backbone that preserves provenance, quality, and consent. The governance layer is not a constraint but a differentiator, enabling faster experimentation, safer deployment, and stronger regulatory alignment as AI becomes a pervasive capability across functions.

Agent orchestration

MIT Tech Review argues that AI agents must do more than talk back; true impact comes when orchestration emerges from multi-agent workflows. The essay maps a future where agents coordinate, negotiate, and hand off tasks with a governance scaffold that prevents derailment. It’s a reminder that the real power isn’t a slick interface or a single clever model—it’s a robust choreography of autonomous actors, each acting within boundaries, each accountable to a shared ledger. The governance question becomes a design constraint rather than a compliance burden, steering teams toward composable, auditable systems that scale responsibly.

10 Things That Matter in AI Right Now

MIT Tech Review offers a curated list of AI trends, from agent orchestration to open-source shifts, painting a landscape where governance, data fabric, and policy collide with rapid experimentation. The list isn’t a static snapshot; it’s a map for executives and engineers navigating a terrain where every trend seeds new capabilities and new constraints. The message is twofold: invest in architecture that sustains velocity, and fortify governance that preserves trust as AI becomes a core engine of decision-making across functions and geographies.

AI backlash is coming for elections

As political races intensify, policy debates and data-center footprints shape the political economy of AI infrastructure. The piece maps a coming countercurrent: concerns about misinformation, data sovereignty, and the resilience of critical infrastructure against manipulation. It’s a reminder that the AI era will be judged not only by breakthroughs but by how governance, procurement, and public accountability adapt to a landscape where digital influence becomes a strategic battleground. The practical question remains: what safeguards, transparency, and incentives can align accelerated AI deployment with the political and ethical fabric of a democratic process?

OpenAI’s updated image generator can now pull information from the web

Image Generator 2.0 steps onto the canvas with web access, enabling richer context and provenance-aware image synthesis. This is not merely a feature tweak; it represents a recalibration of context as a first-class input. Web-sourced prompts can now be anchored to live data, while governance and provenance trails become integral to generation, auditing, and licensing. The outcome is a more powerful, information-rich creative tool—one that invites new workflows for marketing, journalism, and design—but also intensifies the responsibilities around data provenance, copyright, and misinformation risk.

Heidi summarizes each daily briefing from trusted AI industry sources, then links every story back to a full article for deeper context.