AI at the Edge: GPT-5.5 momentum, governance pivots, and consumer AI shifts define May 5, 2026 — JMAC AI News Digest

A day of tectonic AI moves: OpenAI advances with GPT-5.5 while Apple, Google, and regulators recalibrate how models ship to users; edge compute expands; lawsuits and leadership overhauls headline governance and strategy.

May 5, 2026 • Volume I

A walking tour through a living digital gallery: the glow of GPT-5.5’s reliability, the quiet tremor of governance recalibrations, and the consumer-facing shifts shaping everyday AI use.

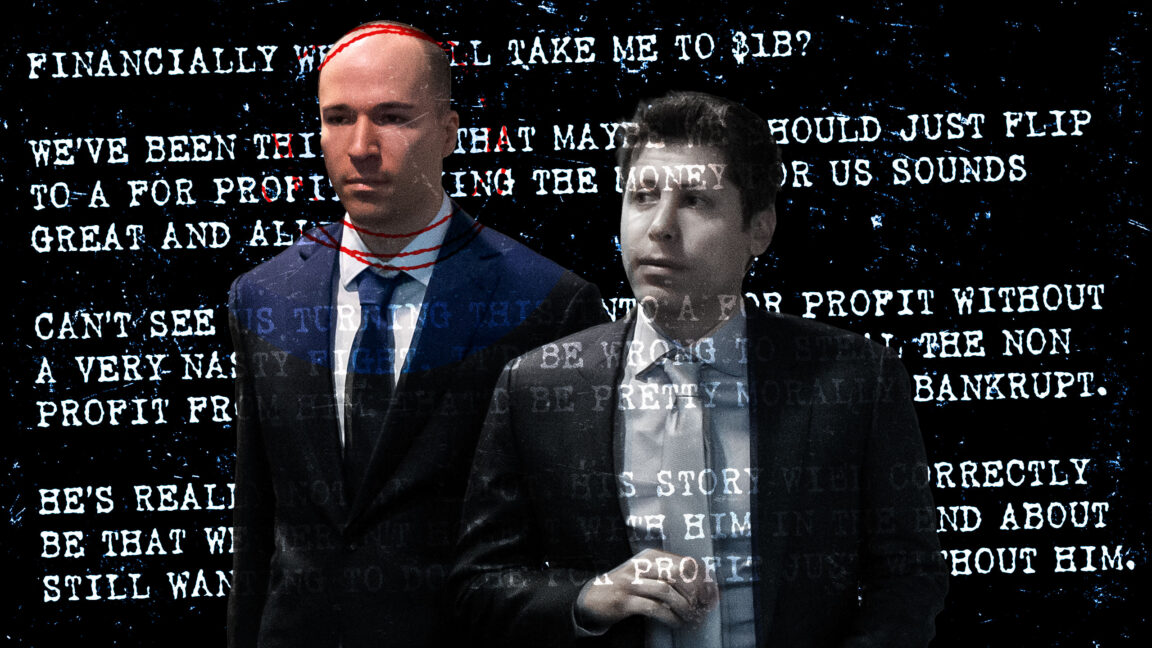

Enter the Gallery

OpenAI diary case puts the spotlight on mission vs. markets

In a courtroom corridor where reputations flicker like neon in a rainstorm, diary entries from a central OpenAI figure become a mirror for a debate older than the industry itself: should AI labs chase market gravity or mission gravity? Ars Technica reports that jurors are weighing what leadership diaries reveal about priorities, incentives, and the ethics of scale. The conversation moves beyond a single company toward the tension baked into every governance decision: how do you preserve the aspirational promise of AI while translating it into products, margins, and governance routines that satisfy investors, regulators, and users? The diary becomes an artifact of the era: a record of the friction between guardianship and growth, accountability and ambition.

Read on Ars Technica

Google DeepMind workers unionize amid military AI deals

The newsroom tremor travels beyond policy briefs into the shop floor. DeepMind’s union vote is a symbol—when workers organize, governance becomes tactile, not abstract. The momentum mirrors broader industry debates about governance, societal impact, and the boundaries of military AI collaborations. The unionization wave offers a counterpoint to pure accelerator culture: it’s a reminder that innovation sits on a social contract, one that must be renegotiated as capabilities scale and deployments touch sensitive arenas. In this moment, governance is less about a three-letter acronym and more about how teams, budgets, and risk frameworks align with public values.

Read on Wired

Google Home Gemini 3.1 upgrades deepen multi-tasking for smart homes

The living room becomes a laboratory of orchestration. Gemini 3.1 pushes multi-step tasks into the domestic future: chains of actions, anticipatory assistants, and a more fluent dialogue with devices. The update hints at a shift from voice-activated commands to agentic planning, in which the home negotiates sequences like “prepare the morning routine, adjust lighting for focus, coordinate lighting scenes, and place a shopping list order if groceries slip.” Privacy and transparency remain the quiet gravity centers: how much context does the home assistant need, and who owns the orchestration data? The promise is layered: an enhanced sense of agency for users, tempered by the careful boundaries that governance demands.

Read on The Verge

Span launches distributed AI data centers for edge compute

The edge becomes the stage for scale in the truest sense. Span’s distributed AI data centers tighten latency, offering responsive inference without saturating central campuses. The architecture promises resilience through geographic dispersion, while orchestration layers promise to keep workloads balanced, secure, and auditable. This is not merely hardware choreography; it’s a shift in where trust accrues—closer to users, closer to the data that fuels decisions, and closer to the regulatory and governance contours that demand transparency about data locality, provenance, and policy adherence.

Read Span coverage

Apple plans to let you choose AI models in iOS 27

A notable pivot in user governance: iOS 27 aims to empower users with a model chooser—a catalog of AI agents, each with disclosed capabilities, privacy posture, and safety guardrails. The choice is framed as a partnership with the user, not a gatekeeper’s decree. It’s a design language that invites accountability—every selection carries a tension between convenience and control. The move reframes “default” as a negotiated baseline rather than a one-size-fits-all choice, signaling a broader industry trend: consumers expect more granular governance of the AI personalities shaping their day-to-day decisions, money, and time.

Read on TechCrunch AI

Microsoft winds down Xbox Copilot; leadership reshapes the platform

When a flagship AI initiative migrates from a constant hum to a deliberate quiet, strategy follows. Xbox Copilot’s wind-down signals a broader rethinking of AI-augmented gaming, balancing immersive assistive features with the constraints of product roadmaps, regulatory scrutiny, and consumer expectations. Leadership reshuffles are rarely decorative; they recalibrate where engineering effort lands, how partnerships form, and what success looks like in a landscape where cloud-native copilots collide with bespoke, on-console experiences. The moment is less about the end of a project and more about a pivot toward a more disciplined, edge-aware gaming future, one that blends performance with predictability, spectacle with stewardship.

Read on The Verge

Musk vs Altman trial live updates: implications for OpenAI governance

The courtroom becomes a window into the high-stakes governance debate: IP ownership, control of AI invention, and the direction of a once-unified institution. The live updates render governance not as abstract policy but as a dynamic negotiation between power centers, risk appetite, and the public interest. The proceedings ripple beyond the courtroom walls, shaping investor sentiment, recruiting narratives, and the tempo of regulatory engagement. If the industry is a narrative, this trial is its dramatic hinge—where the answer to “who steers AI” could redefine who writes the rules, who bears responsibility for missteps, and how openness is balanced against competitive secrecy.

Read on The Verge

GPT-5.5 Instant: system cards and new default model unleash lower hallucinations

OpenAI’s latest ripple in reliability arrives as a systemic shift: GPT-5.5 Instant supersedes prior defaults with smarter initialization and clearer guardrails. The new default model carries a promise of markedly reduced hallucinations, a milestone for risk-sensitive deployments. System cards, safety disclosures, and governance signals converge into a transparent framework that invites developers to audit, compare, and reason about behavior. The result is not merely a technical refinement; it is a recalibration of trust—the premise that high-stakes AI should reveal its assumptions, boundaries, and failure modes with unprecedented clarity.

Read on TechCrunch AI

OpenAI’s GPT-5.5 Instant coverage: less hallucination, smarter clustering

Coverage notes from the field emphasize a sharper factual grounding. Fewer hallucinations, together with smarter clustering of contexts, sharpen the model’s reliability in risk-tuned environments. The narrative is not about a single capability, but about a system-level improvement: evaluation protocols, governance alignment, and a more disciplined approach to confidence estimation. For developers, this unlocks a larger swath of use cases previously constrained by risk. It offers not merely a better model, but a more transparent relationship with users who deserve to know when an answer is a forecast, a guess, or a citation. The era of “trust but verify” receives a robust upgrade.

Read on The Verge

Etsy embraces ChatGPT app integration for shopping experiences

A storefront becomes a narrative. Etsy’s integration with ChatGPT transforms product discovery into a conversational journey: personalized storefronts, contextual recommendations, and conversational shopping that nudges buyers toward a more intimate, AI-assisted interaction with commerce. It’s an early indicator of how consumer AI is merging with the fabric of everyday purchasing. Yet the design challenge remains: how to balance seamless assistance with user autonomy, avoid the trap of over-curation or opaque decision-making, and ensure privacy everywhere a buyer’s data travels.

Read on TechCrunch AI

Google, Microsoft, and xAI agree to pre-deployment government reviews of new models

A quiet revolution in safety governance unfolds as major players commit to pre-deployment evaluations with CAISI, the U.S. Center for AI Standards and Innovation. The objective is not merely to check boxes but to co-create a shared vocabulary across governance, safety, and standards. In practice, this means more rigorous risk assessment, transparent testing protocols, and a willingness to pause deployment when misalignment appears. The result could be a calmer market for AI, one where speed is balanced by accountability, and where regulatory collaboration helps define acceptable risk thresholds before products reach end users. It’s governance-as-a-partner, not governance-as-guardrail.

Read on The Verge

GPT-5.5 System Card and OpenAI safety disclosures anchor new model era

The era of opacity recedes as system cards and explicit safety disclosures accompany a new wave of models. OpenAI codifies a framework where capabilities, limitations, and safety tradeoffs are documented in a machine-readable format that developers can inspect and compare. The practice reframes transparency from a regulatory checkbox into a living contract with users and enterprises. The artifacts themselves become governance instruments—tools that invite scrutiny, enable auditing, and empower procurement teams to align deployments with policy and risk appetite.

Read on OpenAI Blog

OpenAI and PwC reimagine the CFO with AI agents

The finance function becomes a testbed for autonomy. OpenAI’s partnership with PwC envisions AI agents handling workflows, forecasting, and governance controls that previously required human coordination. The aim is not to replace human insight but to augment it with principled automation, scenario planning, and traceable decision trails. This shift presses governance into the heart of corporate finance—the need for auditability, bias checks, and robust controls as workflows scale, vendors blend, and data flows cross-border and cross-system.

Read on OpenAI Blog

Live low-latency voice AI at scale: OpenAI’s engineering feat

The acoustic edge of AI becomes a performance frontier. OpenAI details the engineering choices that deliver low-latency, high-fidelity voice AI at scale—from WebRTC architecture to streaming optimizations and robust congestion control. In practice, this translates to near-real-time conversations with agents, customers, and devices, underpinned by a design that keeps latency predictable even as traffic spikes. It’s not just speed; it’s a quality-of-conversation upgrade that preserves nuance in breath, cadence, and intent while maintaining safety and auditable behavior.

Read on OpenAI Blog

Xbox CEO ends Copilot AI development and overhauls leadership

A rare moment of corporate recalibration: leadership takes the baton, and a flagship initiative is retired or repurposed. The Copilot pivot signals a broader shift in Microsoft’s gaming AI strategy—priorities refocused, teams realigned, and new guardrails defined for product development, safety, and alignment with user expectations. It’s a reminder that even in a market that rewards speed, sustainable momentum depends on disciplined governance, disciplined product language, and a roadmap that can absorb the friction that comes with ambitious experimentation. The hazard, of course, is losing the emotional clarity of a long-running project; the payoff is a platform that can adapt with sharper intent.

Read on Dexerto

Blink – AI Assistant. Knowledge Destination

A curio in the gallery of AI tools emerges: Blink, an assistant that feels like a knowledge destination, with an interface optimized for rapid retrieval, synthesis, and interaction. While the article link sits behind a Hacker News pointer, the concept embodies a broader trend: turning AI into navigable knowledge architectures rather than isolated tasks. Expect debates about provenance, data fidelity, and memory governance to surface as this idea moves from a hackathon project to real-world adoption.

Read on Blink

AI Tools Directory and Comparison Platform

A map for procurement in a dense forest of tools. The directory-and-comparison platform represents a market response to buyers overwhelmed by choice: a catalog, evaluation criteria, and transparent comparisons become governance tools in their own right. As enterprises adopt more AI agents and pipelines, such platforms help define interoperability standards, licensing boundaries, and procurement governance—reducing risk and accelerating informed decision-making across teams and vendors.

Read on Comparatif

Stop Writing Code: The Full-Stack AI Architect

A manifesto for a future where human designers sketch architecture while AI architects assemble modules, orchestrations, and no-code workflows. The piece argues for a full-stack view of AI, where engineering, governance, risk, and product design converge. It’s less about abandoning developers and more about expanding the toolkit—enabling teams to compose, test, and deploy with safety rails and, crucially, with a map of how every component interoperates. In a world that prizes speed, this concept asks for a discipline that ensures that speed is guided by scalable, auditable, and maintainable systems.

Read on Jake Schwartz

Summarized stories

Each story in this briefing links to the full article.

Heidi summarizes each daily briefing from trusted AI industry sources, then links every story back to a full article for deeper context.