AI Pulse, March 28, 2026: OpenAI momentum, Claude Code dynamics, and the evolving agent ecosystem

A focused look at OpenAI's fundraising and in-car AI, Claude/Anthropic code dynamics, and the rapid rise of AI agents and vector-enabled workflows that are reshaping enterprise AI.

The accelerator pedal of AI has slammed to the floor. In a day when headlines sprint from frontier finance to in-car assistants and Tamagotchi-like agents, the landscape feels less like a speedrun and more like a tectonic shift. OpenAI tilts the board with a monster fundraise, the Claude Code leak exposes the armor and nerves of modern agent design, and a growing ecosystem of autonomous helpers edges into everyday life: banking, driving, shopping, and even spaceflight. This is not a moment to watch from the bleachers; it’s a moment to walk through, breath by breath, as a living digital gallery rewires what work, risk, and governance feel like in 2026.

Today’s briefing weaves these threads into a single corridor: capital velocity meeting code governance meeting agent-enabled commerce, with mobility, memory, and multimodal tools marching in step. It’s a day for looking at the seams: the places where hype meets practice, where safety meets speed, and where the tools you use to reason about AI become the tools you rely on to survive the next five years.

The arc is clear: the AI economy isn’t stabilizing; it’s mutating. Frontier compute, enterprise-scale deployments, and on-device, offline agent capabilities are converging with a culture of rapid iteration and a heightened appetite for governance that can actually keep pace with the speed of building. It’s a gallery show where each piece isn’t just art; it’s a blueprint for the next wave of human-AI collaboration.

| Metric | Value | Signal |
|---|---|---|
| OpenAI frontier fundraise | 122B | ↑ |
| Retail investor funding tranche | 3B | ↑ |
| Robotaxi sentiment score (Baidu incident) | -6 | ↓ |

The Claude Code Chronicle: Leaks, Layers, and the Safety Margin

In a week where governance and safety are no longer abstract concepts but everyday topics, the Claude Code leak saga has revealed a stubborn truth: the moment you expose the inner wiring of an adaptive agent, you invite scrutiny, debate, and a reckoning of limits. Anthropic faced a sprawling takedown that wiped thousands of GitHub repos—an emergency cleanup born of a leak that spilled more questions than answers. The move, described as an accident by the company, underscored how static repositories and dynamic agents live in an ever-tightening feedback loop between control and capability.

Ars Technica’s close read of the Claude Code leak points toward strategic glimpses: interactions, on-device/offline capabilities, and potential new modes of agent behavior. If an always-on agent is now plausible, the governance risk spectrum broadens: safety redlines, audit trails, and user consent become operational guardrails rather than abstract ideals. The Verge AI’s reporting on the leak’s Tamagotchi-like “pet” and an always-on agent makes the design decision feel intimate and urgent: what is trusted, what is monitored, and how do you unplug a system that lives on a continuous pulse?

The leak hints at an always-on, Tamagotchi-style agent — a design choice that tests trust, safety, and user consent in real time.

— The Verge AI

Across the ecosystem, the story of Claude’s leak isn’t isolated to one lab or one incident. TechCrunch’s coverage of Anthropic’s leak and governance challenges connects with MIT Technology Review’s broader examination of how agentic systems must be anchored in truth, context, and accountability. It’s a reminder that as agents become more capable, the questions we ask of them (who governs their decisions, how we audit their actions, and what happens when something goes wrong) become citizen-level concerns rather than back-office concerns.

Source references: TechCrunch AI, Ars Technica, The Verge AI, MIT Technology Review

In-Car AI: The Dashboard Becomes a Conversation

AI is no longer bound to a cave of data centers; it’s riding shotgun in real time. CarPlay’s native access to ChatGPT turns the car into a mobile extension of the AI workspace, enabling hands-free conversations, tasks, and navigation that feels less like a tool and more like a companion. The shift is less about one app and more about a model of mobility where privacy, UI choreography, and context become the map for an in-car AI ecosystem.

Google and Apple have long teased seamless mobility experiences; now, the AI layer is not an optional garnish but a core operating principle. The Verge’s CarPlay integration story and related coverage on AI-enabled in-car workflows paint a future where driving lanes double as learning lanes, where prompts become prompts for road-ready decisions, and where the line between human and machine agency blurs in the windshield light.

CarPlay gains native access to ChatGPT, expanding in-car AI capabilities and redefining driver interaction with AI assistants while commuting.

— The Verge AI

As mobility vendors push these experiences outward, there’s a parallel tension: the privacy implications of in-car assistants, the UI/UX choreography that makes voice interfaces feel natural, and the governance frameworks required to ensure these agents don’t misinterpret context in the high-stakes environment of the road.

Source references: The Verge AI, TechCrunch AI, The Verge AI (CarPlay)

Toolkit Maturation: From Prompt to Deployed AI Agent in Minutes

When tooling accelerates from concept to production, the velocity of AI becomes a feature rather than a bug. The LlamaAgents Builder workflow captures this shift: a developer sketches a prompt, and in minutes a living agent can be deployed to handle real-world tasks. The trajectory isn’t merely about faster prompts; it’s about governance and safety baked into the tooling itself—auditability, deployment governance, and real-world risk checks embedded in every step.

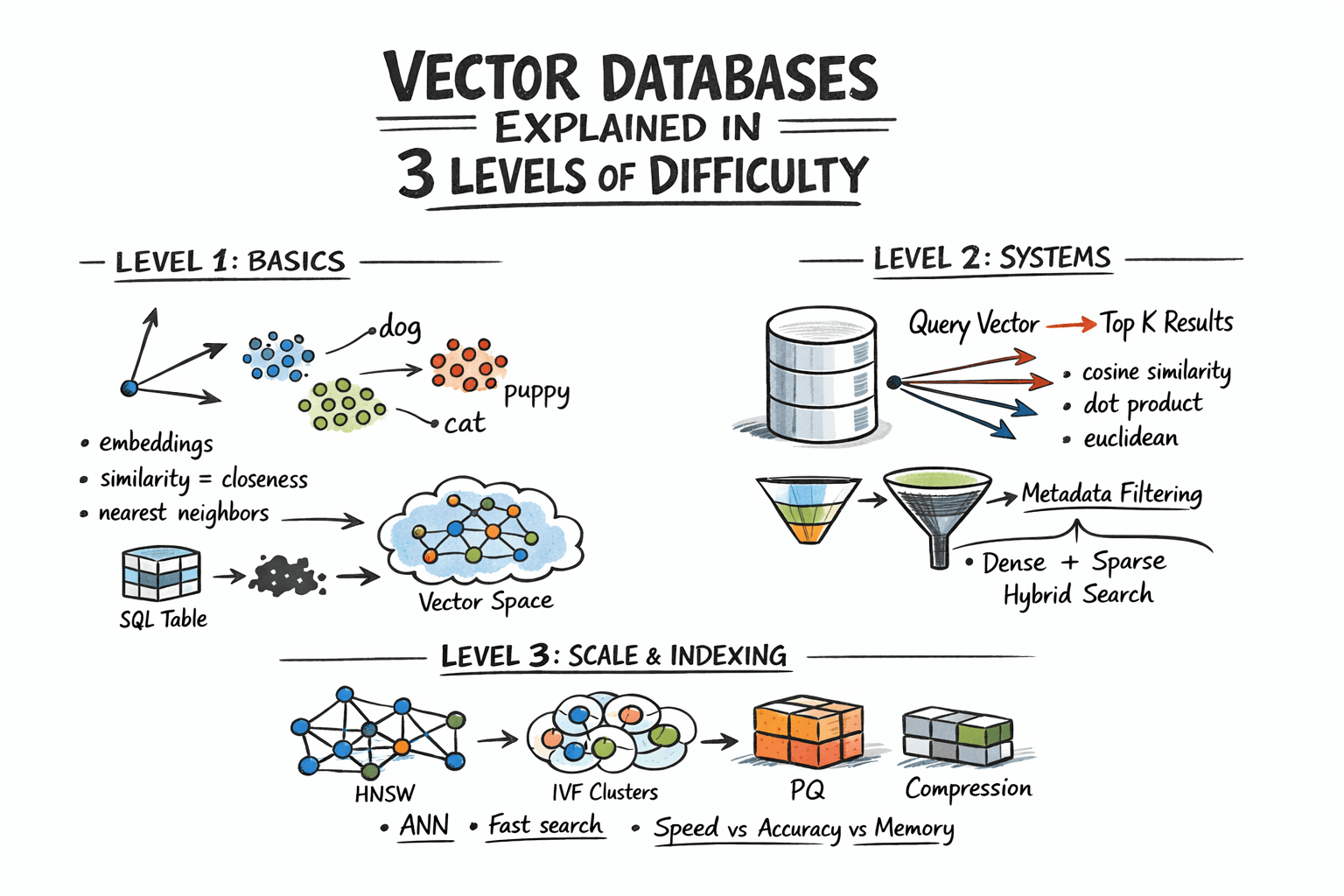

As more teams adopt agent-building toolkits, the boundary between product development and AI behavior increasingly resembles an organizational capability, not a single feature. The shift toward post-training tooling, MLOps governance, and robust agent design is echoed across the ecosystem, from vector databases that scale retrieval to post-training libraries that keep models aligned with evolving field needs.

From prompt to deployed AI agent in minutes—the LlamaAgents Builder mirrors a broader shift: tooling that blurs the line between product and agent, with governance baked in.

— Machine Learning Mastery

Source references: Machine Learning Mastery, ML Mastery (Vector DB Primer), Hugging Face Blog

The Toolkit Era: Vector Databases, Post-Training Libraries, and Agent-Driven Commerce

The current wave isn’t just about smarter models; it’s about smarter workflows. Vector databases turn retrieval into a scalable, real-time memory for AI agents, enabling them to fetch context on demand with precision. The trio of articles about vector databases, post-training libraries like TRL v1.0, and the practical LlamaAgents Builder shows a market moving from “what can AI do?” to “how do we keep AI aligned, auditable, and deployable at scale?” This is the architecture of trust, built brick by brick, with toolchains that enforce governance as a feature rather than a constraint.

- Vector memory matters. A three-level primer illustrates how proximity and retrieval impact agent accuracy and efficiency.

- Post-training tooling is a new spine for production AI. Libraries designed to stay current with field needs avoid retraining overhead and accelerate updates.

- Agent tooling is maturing into enterprise playbooks. Builder workflows convert prompts into deployed agents, with governance baked in.
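To make the "vector memory" idea concrete, here is a minimal sketch of proximity-based retrieval in plain Python. It is illustrative only and is not taken from any of the cited articles or from a real vector database API: the `MEMORY` entries, their three-dimensional embeddings, and the helper names `cosine_similarity` and `top_k` are all hypothetical. A production system would use learned embeddings and an approximate nearest-neighbor index rather than this brute-force scan.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query, memory, k=2):
    """Return the k (score, text) pairs whose vectors are closest to the query."""
    scored = [(cosine_similarity(query, vec), text) for text, vec in memory]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Hypothetical agent memory: (text, toy embedding). Real embeddings come from a model.
MEMORY = [
    ("refund policy", [0.9, 0.1, 0.0]),
    ("shipping times", [0.1, 0.9, 0.1]),
    ("account security", [0.0, 0.2, 0.9]),
]

# A query vector that points mostly along the first dimension.
results = top_k([0.8, 0.2, 0.0], MEMORY, k=2)
for score, text in results:
    print(f"{score:.3f}  {text}")
```

The agent would pass the retrieved text to the model as context. The point of the sketch is only that "proximity" means a similarity score between vectors, and that retrieval quality depends on how well those vectors capture meaning.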

Agentic commerce runs on truth and context—the factory line for reliable, auditable automation.

— MIT Technology Review

Source references: Machine Learning Mastery, Hugging Face Blog, Machine Learning Mastery

The Artemis Point: AI Safety in High-Stakes Space Operations

If the Earthbound AI lab is the living room, Artemis II is the observatory. NASA’s bold mission tests AI-assisted systems in the crucible of complex engineering and mission control. The AI safety narratives formed in these aerospace corridors are not ornamental; they are the rulebook for reliability, fault tolerance, and governance in mission-critical domains. The story isn’t about kicking the tires of a rover; it’s about building confidence in AI-augmented procedures that hold human life in the balance.

Artemis II’s AI-assisted systems are a proving ground for safety, governance, and reliability at the outer edge of human exploration.

— Ars Technica

Source references: Ars Technica, MIT Technology Review

From Prompt to Deployed Agent: The LlamaAgents Builder

Speed is not merely a virtue; it’s a requirement. The LlamaAgents Builder demonstrates how a prompt evolves into a deployed AI agent—an emblem of a broader maturation: tooling that allows teams to harness AI for real-world tasks with governance as an ingrained practice. The practical side of this transformation is a new operational agility: automated workflows in banking, commerce, and customer service becoming a standard rather than a privilege.

Builder workflows convert prompts into deployed agents, accelerating production AI while embedding governance at the core.

— Machine Learning Mastery

Source references: Machine Learning Mastery, ML Community

Commerce, Truth, and Context: Agentic Transactions in the Real World

As agent-enabled commerce becomes a baseline capability, the focus shifts from “can we automate this?” to “how can we guarantee truthful, context-aware interactions?” MIT Technology Review grounds this shift in a careful analysis of the tradeoffs between speed and trust: agents must not only be fast; they must be auditable, honest in their decisions, and transparent about limitations. The discourse around agentic commerce is moving from spectacle to stewardship, and the stakes are rising accordingly, especially in consumer-facing scenarios where consent and privacy collide with convenience.

Agentic commerce runs on truth and context—this is the design requirement for trustworthy automation in consumer workflows.

— MIT Technology Review

Source references: MIT Technology Review, MIT Technology Review (Hype Index)

Soundtracks of Change: AI Music, Policy, and Patent Winds

AI-generated music sits at the interface of creativity and regulation. The Verge’s coverage captures the policy edge as artists and platforms test new tooling, copyright questions, and the cultural impact of machine-driven composition. It’s a microcosm of a larger economy where AI capabilities flow into cultural industries, raising questions about authorship, compensation, and the boundaries of remix as a legal and artistic practice. The music AI moment isn’t a sideshow; it’s a bellwether for how policy, platform design, and creative practice will co-evolve in the coming year.

Source references: The Verge AI

The Horizon: A World of Agents, Cars, and Cosmic Confidence

The next wave arrives not as a single invention but as an ecosystem flourish: AI agents that learn from structured data and user consent, cars that talk back with nuance, and governance frameworks that can bend without breaking. The OpenAI fundraising beat underscores the economics: frontier compute and enterprise AI deployments require scalability, risk management, and governance at scale. The Artemis II mission, meanwhile, hints at a future where AI systems operate not only in software but in the critical systems that safeguard human life in space and on Earth. And the continued tension between hype and reality—captured by MIT Tech Review’s hype index and the steady drumbeat of high-profile governance stories—reminds us that the biggest AI achievements will be measured not just by speed, but by safety, accountability, and stewardship.

In this world, the lines between tool and teammate blur. Gradient Labs’ bank-facing AI accounts, followed by a chorus of agent-based automation in retail, finance, and services, show a path toward enterprise-grade, trusted automation that can scale without sacrificing governance. Bluesky’s Attie, a social AI assistant for feeds and personalization, demonstrates how consent and context can empower users while keeping platforms accountable. And across the board, the push toward post-training libraries and robust vector memory architectures suggests that the industry is building the memory and governance rails required for AI to operate reliably in dynamic real-world settings.

We are witnessing not a single breakthrough but a new operating system for AI—one that blends memory, governance, and human-centric design into everyday workflows.

— Industry analyst synthesis

Source references: OpenAI Blog, The Verge AI, MIT Technology Review, TechCrunch AI

Where AI once came in waves, it now travels as a fabric: weighted, monitored, and collaboratively governed—ready to fold into daily life without erasing responsibility.

— Daily briefing synthesis

Summarized stories

Each story in this briefing links to the full article.

Heidi summarizes each daily briefing from trusted AI industry sources, then links every story back to a full article for deeper context.