March 30, 2026 AI News Digest — Monday momentum in OpenAI, Claude, and AI agents

A focused briefing on today’s OpenAI-led shifts, Claude traction, agent-enabled workflows, and automation trends shaping research, policy, and enterprise deployment.

The future is being deployed in minutes, not months. This Monday, the neural tide isn’t just rising—it’s spilling into every corner of work, play, and care. Today’s AI briefing isn’t a digest of headlines; it’s a walk through a living gallery where experiments become workflows, where copilots evolve into teammates, and where the edge between tool and partner keeps dissolving.

OpenAI, Claude, and a constellation of autonomous agents are no longer concepts whispered in incubators; they are becoming the plumbing of modern organizations. Across the long neon corridor of 2026, the tempo has shifted from “build it” to “deploy it,” from isolated experiments to interconnected ecosystems. If yesterday’s AI was a single instrument, today’s AI is an orchestra: composing, adapting, and responding in real time to the texture of our work and of the world itself.

As you wander this briefing, notice the threads: rapid agent deployment that folds prompts into functioning workflows; consumer-grade copilots catalyzing enterprise-scale capability; governance and safety becoming design constraints rather than afterthoughts; and a global tapestry where AI tools shape relief operations, gaming accessibility, and creative expression alike. This is the Monday momentum—the momentum of a field learning to live up to its own promise.

| Metric | Value | Signal |
|---|---|---|
| Images in digest | 4 | ▲ |
| Positive-sentiment sources | 4 | ▲ |
| Negative-sentiment sources | 2 | ▼ |
| Net sentiment delta | +2 | ▲ |

The Rise of Autonomous Agents

In a world where a prompt can birth a workflow, the LlamaAgents Builder stands as a high-water mark of that momentum. What began as a clever trick in prompt engineering now acts as a conduit from ideation to orchestration, a bridge from human intent to machine action. The story isn’t merely about speed; it’s about a new discipline: engineering with intent that anticipates context, cross-application needs, and the rhythms of business operations. The builder’s promise, turning a line of text into a deployed agent, signals a shift from “hack-ahead” to “engineer-in-time.”

The architecture behind it leans on MCP, the Model Context Protocol, an open standard for connecting an agent to external tools and data sources through one common interface. That design points toward a future where a single agent can juggle tasks across domains, switching contexts with a grace that used to require a team of specialists. It’s not magic; it’s the normalization of a pattern: the agent as a first-class citizen in the software stack, one that can ingest prompts, assemble workflows, and execute them with a reliability that once demanded human oversight at every turn.
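To make the protocol a little more concrete: MCP (the Model Context Protocol) messages are JSON-RPC 2.0 requests, and after its initialization handshake a client typically discovers a server's tools with `tools/list` and then invokes one with `tools/call`. The Python sketch below only frames those two requests; it does not open a connection or talk to a real server, and the tool name `lookup_invoice` and its `invoice_id` argument are hypothetical placeholders, not part of any product covered in this briefing.

```python
import json
from itertools import count
from typing import Optional

# Monotonically increasing request IDs (an illustrative choice; MCP expects
# each request ID to be unique within a session).
_request_ids = count(1)


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP builds on."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Step 1: ask the MCP server which tools it exposes.
list_request = jsonrpc_request("tools/list")

# Step 2: invoke one tool by name with structured arguments.
# "lookup_invoice" and "invoice_id" are hypothetical placeholders.
call_request = jsonrpc_request(
    "tools/call",
    {"name": "lookup_invoice", "arguments": {"invoice_id": "INV-1042"}},
)

print(list_request)
print(call_request)
```

Because every capability sits behind the same name-and-arguments contract, an agent can pick up a new tool without retraining; the model only has to decide which tool to call and with what arguments.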

For teams watching this space, the implication is both practical and existential. Deployments in minutes redefine project timelines; the cost of prototyping compresses into hours; and governance questions—data freshness, privacy, and safety—become design constraints embedded into the scaffolding of the agent itself. The lab’s edge is shifting from clever ideas to scalable platforms, from novelty to habit. And that shift is what makes this Monday momentum feel less like a blip and more like a tectonic plate reorientation.

- Prompt-to-deployed-agent workflows are accelerating the path from idea to execution.

- MCP lets agents connect to new tools and data sources, and so work across multiple contexts, without retraining from scratch.

- Architectures are evolving to bake governance, privacy, and safety into the agent’s behavior by design.

- The ecosystem is coalescing around building blocks—tools, plugins, and orchestrators—that reduce the friction of deployment.

From prompt to deployed AI agent in minutes

— Machine Learning Mastery

Claude’s Popularity: Momentum in Consumer AI

Claude’s ascent isn’t a niche story about a single product; it’s a reflection of a broader appetite for consumer-ready copilots and enterprise-grade assistants that blur the line between assistant and colleague. The narrative is simple on the surface: subscriptions are rising, and with them, the expectation that AI can shoulder more of the cognitive load in daily work. The cadence of growth suggests a demand curve that is less about novelty and more about reliability, consistency, and the feel of a tool that tunes itself to the user’s work.

The momentum isn’t happening in a vacuum. It sits alongside an ecosystem where orchestration, governance, and deployment patterns are maturing. The paid tier signals a willingness to invest in a platform that promises to become not merely a feature but a baseline capability for teams navigating complex decision flows, customer interactions, and data governance requirements. In other words, the growth reflects a shared expectation that AI copilots will become a standard, long-term partner in the enterprise stack.

What this signals for markets and product teams is a subtle shift in the value equation: sustainable, paid adoption is tied to signals of enterprise readiness, supporting ecosystems, and assurances around data handling and safety. That’s not a footnote; it’s the drumbeat of a new consumer-to-enterprise continuum for AI copilots, where the most successful offerings will be those that weave human-centered design with robust governance and practical integration points.

- Paid subscriptions indicate durable demand for consumer-ready AI copilots with enterprise capabilities.

- Enterprise-grade features—the guardrails, governance, and data controls—become a differentiator, not a luxury.

- Successful copilots blend strong UX with reliable security and compliance support.

- The market is building a pipeline from consumer familiarity to enterprise trust.

Claude’s paid subscriptions are growing rapidly

— TechCrunch AI

Source: Anthropic’s Claude popularity with paying consumers is skyrocketing

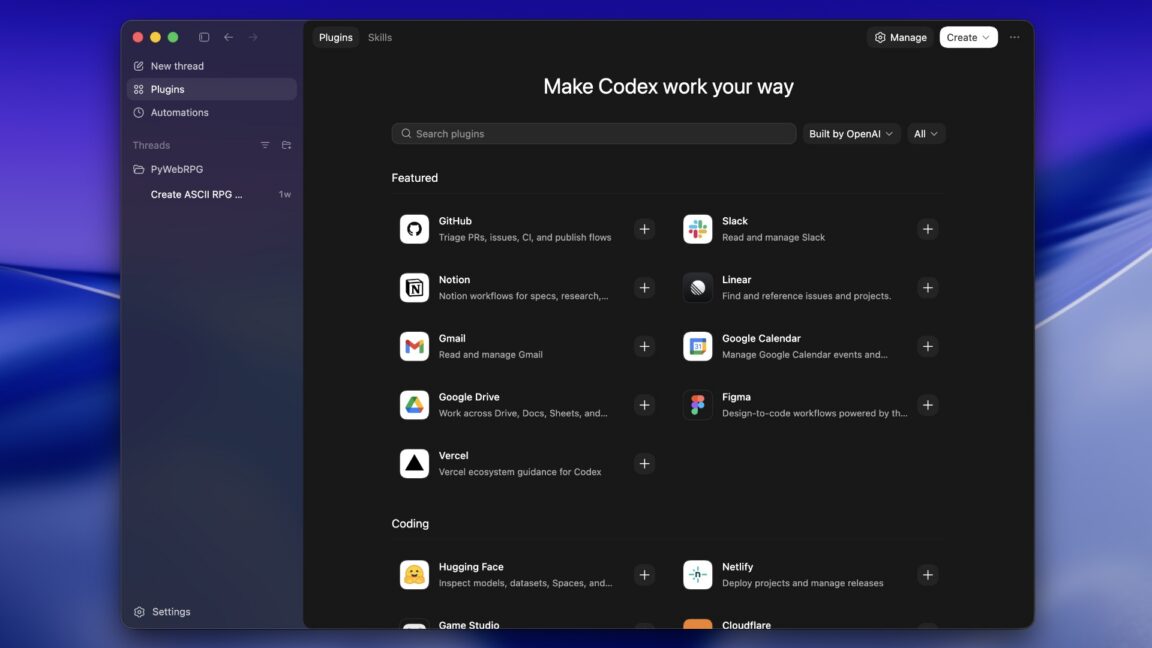

Developer Stack: Codex Plugins and the MCP Frontier

OpenAI’s plugin expansion for Codex is less a discrete upgrade and more a rearchitecting of the dev stack. Plugins close critical gaps, bringing in external capabilities, data sources, and domain-specific tools, so that Codex can grow from a coding assistant into a central development ecosystem. The project isn’t merely about automation; it’s about enabling multi-context workflows that resemble real production, where code, data, and infrastructure coexist within coherent, MCP-enabled pipelines. The argument isn’t that Codex is perfect today; it’s that Codex is becoming the scalable spine of a more autonomous, more capable developer stack.

As teams explore these plugins, they experience the shift toward a holistic stack where automation, testing, deployment, and monitoring start to share the same lifecycle. The plugins don’t just fill gaps; they redraw the map of what “coding” means in a world where an assistant can synthesize requirements, fetch data, validate constraints, and even drive integration tests. This isn’t science fiction; it’s the everyday pragmatism of teams who need to move faster while staying aligned with governance and security mandates.

In the broader arc, Codex plugins become a case study in how software tooling evolves: from a single assistant to a platform, from task automation to orchestration, from dry lines of code to living, connected workflows. It’s a testament to how quickly a strategic capability can shift the economic math of software delivery, turning a once-tentative bet into a core competitive advantage.

- Codex plugins extend Codex from coding aid to holistic development stack.

- Plugins seed sharper MCP-enabled workflows, enabling cross-context automation.

- The shift reframes software delivery as an integrated lifecycle across brains, bots, and infrastructure.

- Governance and security become design constraints baked into plugin-enabled workflows.

OpenAI’s plugins to Codex: closing gaps and moving beyond coding

— Ars Technica

Source: OpenAI’s plugins to Codex: closing gaps and moving beyond coding

Gaming, Accessibility, and the Human Side of AI

AI isn’t only about productivity suites and enterprise pipelines; it is also shaping how we play and how we access digital worlds. A retrospective on playing Wolfenstein 3D with one hand in 2026 offers a quietly radical lens on accessibility, designer intent, and the enduring magic of classic game design. The piece isn’t nostalgia for nostalgia’s sake; it’s a reminder that accessibility can illuminate how we build AI systems that are inclusive from the pixels up. If the design constraints of the 1990s forced compromise, 2026’s AI-enabled interfaces can turn that constraint into possibility: one-handed play, adaptive controls, and interfaces that learn user preferences without crossing privacy lines.

What looks like a clever throwback is, in truth, a case study in the social design of AI. Accessibility isn’t an afterthought; it’s a lens on how AI should reason about user capability, context, and intent. The gaming realm—where latency, input models, and feedback loops are felt profoundly—gives us a textured blueprint for broader AI adoption: design for diversity of use, build robust fallback behaviors, and test for edge cases early and often. The lesson echoes beyond the screen: accessibility is not a constraint; it is a design metric that augments resilience and trust in AI systems.

- Accessibility in gaming foreshadows inclusive AI design across industries.

- One-handed play and adaptive controls highlight the need for resilient input models in AI tools.

- Classic game design becomes a proving ground for modern AI interaction patterns.

Playing Wolfenstein 3D with one hand in 2026

— Ars Technica

The Horizon: Looking Ahead

If today’s briefing feels cinematic, that’s because the near future is not a series of isolated breakthroughs but a choreography. The next acts of AI progress will hinge on the elasticity of deployment, governance by design, and the humane framing of capability. We’re watching five interlocking currents converge into a recognizable arc: the acceleration of agent-enabled workflows, the maturation of copilots as trusted partners, the governance and data-handling scaffolds that keep risk aligned with reward, the extension of developer ecosystems via plugins and MCP-enabled orchestration, and the persistent undercurrent of AI’s reach into global humanitarian and cultural domains.

First, the agent layer will embed itself in every core process, from procurement to customer service to field operations, driven by platform choices that make “how” a question of standardization rather than a bespoke hack. Second, copilots will transition from being extraordinary assistants to becoming standard operating capabilities. This is the shift from “we have a bot” to “the bot is part of how we do work.” Third, governance will stop being a box to tick and start becoming a continuous design discipline: privacy by default, safety by design, and transparency built into every decision path an agent can take. Fourth, plugin ecosystems and MCP-enabled tooling will not merely accelerate development; they will redefine the economics of software delivery, reducing delivery risk and enabling more complex coordination between humans and machines. And fifth, the human dimension (privacy, safety, and inclusivity) will demand more thoughtful interfaces, compelling narratives, and better controls to ensure AI remains a force for trust, not a vector of disruption.

In this gallery, the narrative isn’t only about what AI can do—it’s about how we choose to live with it, and how we shape the culture around its deployment. The Monday momentum isn’t a sprint; it’s a practice. It asks: how do we design, govern, and deploy AI so that the throughput of progress aligns with the ethics of stewardship? The answer will emerge as teams across industries embrace cross-disciplinary collaboration, as developers learn to thread governance into every deployment, and as the world discovers new ways to turn AI from a phenomenon into a responsible partner in our shared work and play.

As you close this briefing, carry with you a question: what is the design discipline for trust in an era where prompts become action, and where action becomes reliable workflow? The next chapter is not about more power alone; it’s about better alignment, deeper accountability, and a future where AI’s promise is realized in the everyday rhythms of work, education, and humanity itself.

Summarized stories

Each story in this briefing links to the full article.

Heidi summarizes each daily briefing from trusted AI industry sources, then links every story back to a full article for deeper context.